24 September 2021 to 23 September 2009 · tagged essay/tech

¶ A short essay about long playlists of short tracks of rain noises and streaming-music economics. · 24 September 2021 essay/tech

Rolling Stone published this recent story (https://www.rollingstone.com/pro/features/spotify-sleep-music-playlists-lady-gaga-1223911/) about the streaming success of the sleep-noise artist/label/scheme Sleep Fruits, who chop up background rain-noise recordings into :30 lengths to maximize streaming playcounts.

Sleep Fruits is undeniably and intentionally exploiting the systemic weakness of the industry-wide :30-or-more-is-a-play rule, as too are audiobook licensors who split their long content into :30 "chapters". The :30 thing is a bad rule. Most of the straightforward alternatives are also bad, so it wasn't an obviously insane initial system design-choice, but this abuse vector is logical and inevitable.

The effect of the abuse for the label doing it is simple: exploitative multiplication of their "natural" streams by a large factor. x6 if you compare it to rain noise sliced into pop-song-size lengths.

The effect on the rest of the streaming economy is more complicated. More money to Sleep Fruits does mean less money to somebody else, at least in the short term.

Under the current pro-rata royalty-allocation system used by all major subscription streaming services (one big pool, split by stream-share), the effects of Sleep Fruits' abuse are distributed across the whole subscription pool. The burden is shared by all other artists, collectively, but is fractional and negligible for any individual artist. In addition, under pro-rata if an individual listener plays Sleep Fruits overnight, every night, it doesn't change the value of their "real" music-listening activity during the day. Those artists get the same benefit from those fans as they would from a listener who sleeps in silence.

Under the oft-proposed user-centric payment system, in which each listener's payments are split according to only their plays, Sleep Fruits' short-track abuse tactic would be less effective for them. Not as much less effective as you might think, because the same two things that inflate their overall numbers (long-duration background playing + short tracks) inflate their share of each listener's plays. But less, because in the pro-rata model one listener can direct more revenue than they contributed, and in the user-centric model they can't.

In the user-centric model, though, if an individual listener listens to Sleep Fruits overnight, that directly reduces the money that goes to their daytime artists. Where pro-rata disperses the burden, user-centric would concentrate it on the kinds of artists whose fans also listen to background noise. This is probably worse in overall fairness, and it's definitely worse in terms of the listener-artist relationship, which is one of the key emotional arguments for the user-centric model.

The interesting additional economic twist to this particular case, though, is that sleeping to background noise works very badly if it's interrupted by ads. Background listening is thus a powerful incentive for paid subscriptions over ad-supported streaming. (Audiobooks similarly, since they essentially require full on-demand listening control.) So if Sleep Fruits drives background listeners to subscribe, it might be bringing in additional money that could offset or even exceed the amount extracted by its abuse. (Maybe. The counterfactual here is hard to assess quantitatively.)

And although the :30 rule is what made this example newsworthy in its exaggerated effect, in truth it's probably not really the fundamental problem. The deeper issue is just that we subjectively value music based on the attention we pay to it, but we haven't figured out a good way to translate between attention paid and money paid. Switching from play-share to time-share would eliminate the advantage of cutting up rain noise into :30 lengths, but wouldn't change the imbalance between 8 hours/night of sleep loops and 1-2 hours/day of music listening. CDs "solved" this by making you pay for your expected attention with a high fixed entry price, which isn't really any more sensible.

I don't think we're going to solve this with just math, which disappoints me personally, since I'm pretty good at solving math-solvable things with math. But in general I think time-share is a slightly closer approximation of attention-share than play-share, and thus preferable. And rather than trying to discount low-attention listening, which seems problematic and thankless and negative, it seems more practical and appealing to me to try to add incremental additional rewards to high-attention fandom. E.g. higher-cost subscription plans in which the extra money goes directly to artists of the listener's choice, in the form of microfanclubs supported by platform-provided community features. There are a lot of people who, like me, used to spend a lot more than $10/month on music, and could probably be convinced to spend more than that again if there were reasons.

Of course, not coincidentally, I have ideas about community features that can be provided with math. Lots of ideas. They come to me every :30 while I sleep.

PS: I've seen some speculation that Sleep Fruits is buying their streams. I'm involved enough in fraud-detection at Spotify to say with at least a little bit of confidence that this is probably not the case. Large-scale fraud is pretty easy to detect, and the scale of this is large. It's abusing a systemic weakness, but not obviously dishonestly.

Sleep Fruits is undeniably and intentionally exploiting the systemic weakness of the industry-wide :30-or-more-is-a-play rule, as too are audiobook licensors who split their long content into :30 "chapters". The :30 thing is a bad rule. Most of the straightforward alternatives are also bad, so it wasn't an obviously insane initial system design-choice, but this abuse vector is logical and inevitable.

The effect of the abuse for the label doing it is simple: exploitative multiplication of their "natural" streams by a large factor. x6 if you compare it to rain noise sliced into pop-song-size lengths.

The effect on the rest of the streaming economy is more complicated. More money to Sleep Fruits does mean less money to somebody else, at least in the short term.

Under the current pro-rata royalty-allocation system used by all major subscription streaming services (one big pool, split by stream-share), the effects of Sleep Fruits' abuse are distributed across the whole subscription pool. The burden is shared by all other artists, collectively, but is fractional and negligible for any individual artist. In addition, under pro-rata if an individual listener plays Sleep Fruits overnight, every night, it doesn't change the value of their "real" music-listening activity during the day. Those artists get the same benefit from those fans as they would from a listener who sleeps in silence.

Under the oft-proposed user-centric payment system, in which each listener's payments are split according to only their plays, Sleep Fruits' short-track abuse tactic would be less effective for them. Not as much less effective as you might think, because the same two things that inflate their overall numbers (long-duration background playing + short tracks) inflate their share of each listener's plays. But less, because in the pro-rata model one listener can direct more revenue than they contributed, and in the user-centric model they can't.

In the user-centric model, though, if an individual listener listens to Sleep Fruits overnight, that directly reduces the money that goes to their daytime artists. Where pro-rata disperses the burden, user-centric would concentrate it on the kinds of artists whose fans also listen to background noise. This is probably worse in overall fairness, and it's definitely worse in terms of the listener-artist relationship, which is one of the key emotional arguments for the user-centric model.

The interesting additional economic twist to this particular case, though, is that sleeping to background noise works very badly if it's interrupted by ads. Background listening is thus a powerful incentive for paid subscriptions over ad-supported streaming. (Audiobooks similarly, since they essentially require full on-demand listening control.) So if Sleep Fruits drives background listeners to subscribe, it might be bringing in additional money that could offset or even exceed the amount extracted by its abuse. (Maybe. The counterfactual here is hard to assess quantitatively.)

And although the :30 rule is what made this example newsworthy in its exaggerated effect, in truth it's probably not really the fundamental problem. The deeper issue is just that we subjectively value music based on the attention we pay to it, but we haven't figured out a good way to translate between attention paid and money paid. Switching from play-share to time-share would eliminate the advantage of cutting up rain noise into :30 lengths, but wouldn't change the imbalance between 8 hours/night of sleep loops and 1-2 hours/day of music listening. CDs "solved" this by making you pay for your expected attention with a high fixed entry price, which isn't really any more sensible.

I don't think we're going to solve this with just math, which disappoints me personally, since I'm pretty good at solving math-solvable things with math. But in general I think time-share is a slightly closer approximation of attention-share than play-share, and thus preferable. And rather than trying to discount low-attention listening, which seems problematic and thankless and negative, it seems more practical and appealing to me to try to add incremental additional rewards to high-attention fandom. E.g. higher-cost subscription plans in which the extra money goes directly to artists of the listener's choice, in the form of microfanclubs supported by platform-provided community features. There are a lot of people who, like me, used to spend a lot more than $10/month on music, and could probably be convinced to spend more than that again if there were reasons.

Of course, not coincidentally, I have ideas about community features that can be provided with math. Lots of ideas. They come to me every :30 while I sleep.

PS: I've seen some speculation that Sleep Fruits is buying their streams. I'm involved enough in fraud-detection at Spotify to say with at least a little bit of confidence that this is probably not the case. Large-scale fraud is pretty easy to detect, and the scale of this is large. It's abusing a systemic weakness, but not obviously dishonestly.

¶ 2019 in Music · 6 January 2020 essay/listen/tech

I starting making one music-list a year some time in the 80s, before I really knew enough for there to be any sense to this activity. For a while in the 90s and 00s I got more serious about it, and statistically way better-informed, but there's actually no amount of informedness that transforms a single person's opinions about music into anything that inherently matters to anybody other than people (if any) who happen to share their specific tastes, or extraordinarily patient and maybe slightly creepy friends.

Collect people together, though, and the patterns of their love are sometimes very interesting. For several years I presided computationally over an assembly of nominal expertise, trying to find ways to turn hundreds of opinions into at least plural insights. Hundreds of people is not a lot, though, and asking people to pretend their opinions matter is a dubious way to find out what they really love. I'm not really sad we stopped doing that.

Hundreds of millions of people isn't that much, yet, but it's getting there, and asking people to spend their lives loving all the innumerable things they love is a more realistic proposition than getting them to make short numbered lists on annual deadlines. Finding an individual person who shares your exact taste, in the real world, is not only laborious to the point of preventative difficulty, but maybe not even reliably possible in theory. Finding groups of people in the virtual world who collectively approximate aspects of your taste is, due to the primitive current state of data-transparency, no easier for you.

But it has been my job, for the last few years, to try to figure out algorithmic ways to turn collective love and listening patterns into music insights and experiences. I work at Spotify, so I have extremely good information about what people like in Sweden and Norway, fairly decent information about most of the rest of Europe, the Americas and parts of Asia, and at least glimmers of insight about literally almost everywhere else on Earth. I don't know that much about you, but I know a little bit about a lot of people.

So now I make a lot of lists. Here, in fact, are algorithmically-generated playlists of the songs that defined, united and distinguished the fans and love and new music in 2000+ genres and countries around the world in 2019:

2019 Around the World

You probably don't share my tastes, and this is a pretty weak unifying force for everybody who isn't me, but there are so many stronger ones. Maybe some of the ones that pull on you are represented here. Maybe some of the communities implied and channeled here have been unknowingly incomplete without you. Maybe you have not yet discovered half of the things you will eventually adore. Maybe this is how you find them.

I found a lot of things this year, myself, some of them in this process of trying to find music for other people, and some of them just by listening. You needn't care about what I like. And if for some reason you do, you can already find out what it is in unmanageable weekly detail. But I like to look back at my own years. Spotify's official forms of nostalgia so far define years purely by listening dates, but as a music geek of a particular sort, what I mean by a year is music that was both made and heard then. New music.

I no longer want to make this list by applying manual reductive retroactive impressions to what I remember of the year, but I also don't have to. Adapting my collective engines to the individual, then, here is the purely data-generated playlist of the new music to which I demonstrated the most actual listening attachment in 2019:

2019 Greatest Hits (for glenn mcdonald)

And for segmented nostalgia, because that's what kind of nostalgist I am, I also used genre metadata and a very small amount of manual tweaking to almost automatically produce three more specialized lists:

Bright Swords in the Void (Metal and metal-adjacent noises, from the floridly melodic to the stochastically apocalyptic.)

Gradient Dissent (Ambient, noise, epicore and other abstract geometries.)

Dancing With Tears (Pop, rock, hip hop and other sentimental forms.)

And finally, although surely this, if anything, will be of interest to absolutely nobody but me, I also used a combination of my own listening, broken down by genre, and the global 2019 genre lists, to produce a list of the songs I missed or intentionally avoided despite their being popular with the fans of my favorite genres.

2019 Greatest Misses (for glenn mcdonald)

I made versions of this Misses list in November and December, to see what I was in danger of missing before the year actually ended, so these songs are the reverse-evolutionary survivors of two generations of augmented remedial listening. But I played it again just now, and it still sounds basically great to me. I'm pretty sure I could spend the next year listening to nothing but songs I missed in 2019 despite trying to hear them all, and it would be just as great in sonic terms. There's something hypothetically comforting in that, at least until I starting trying to figure out what kind of global catastrophe I'm effectively imagining here. I'm alive, but all the musicians in the world are dead? Or there's no surviving technology for recording music, but somehow Spotify servers and the worldwide cell and wifi networks still work?

Easier to live. I now declare 2019 complete and archived. Onwards.

Collect people together, though, and the patterns of their love are sometimes very interesting. For several years I presided computationally over an assembly of nominal expertise, trying to find ways to turn hundreds of opinions into at least plural insights. Hundreds of people is not a lot, though, and asking people to pretend their opinions matter is a dubious way to find out what they really love. I'm not really sad we stopped doing that.

Hundreds of millions of people isn't that much, yet, but it's getting there, and asking people to spend their lives loving all the innumerable things they love is a more realistic proposition than getting them to make short numbered lists on annual deadlines. Finding an individual person who shares your exact taste, in the real world, is not only laborious to the point of preventative difficulty, but maybe not even reliably possible in theory. Finding groups of people in the virtual world who collectively approximate aspects of your taste is, due to the primitive current state of data-transparency, no easier for you.

But it has been my job, for the last few years, to try to figure out algorithmic ways to turn collective love and listening patterns into music insights and experiences. I work at Spotify, so I have extremely good information about what people like in Sweden and Norway, fairly decent information about most of the rest of Europe, the Americas and parts of Asia, and at least glimmers of insight about literally almost everywhere else on Earth. I don't know that much about you, but I know a little bit about a lot of people.

So now I make a lot of lists. Here, in fact, are algorithmically-generated playlists of the songs that defined, united and distinguished the fans and love and new music in 2000+ genres and countries around the world in 2019:

2019 Around the World

You probably don't share my tastes, and this is a pretty weak unifying force for everybody who isn't me, but there are so many stronger ones. Maybe some of the ones that pull on you are represented here. Maybe some of the communities implied and channeled here have been unknowingly incomplete without you. Maybe you have not yet discovered half of the things you will eventually adore. Maybe this is how you find them.

I found a lot of things this year, myself, some of them in this process of trying to find music for other people, and some of them just by listening. You needn't care about what I like. And if for some reason you do, you can already find out what it is in unmanageable weekly detail. But I like to look back at my own years. Spotify's official forms of nostalgia so far define years purely by listening dates, but as a music geek of a particular sort, what I mean by a year is music that was both made and heard then. New music.

I no longer want to make this list by applying manual reductive retroactive impressions to what I remember of the year, but I also don't have to. Adapting my collective engines to the individual, then, here is the purely data-generated playlist of the new music to which I demonstrated the most actual listening attachment in 2019:

2019 Greatest Hits (for glenn mcdonald)

And for segmented nostalgia, because that's what kind of nostalgist I am, I also used genre metadata and a very small amount of manual tweaking to almost automatically produce three more specialized lists:

Bright Swords in the Void (Metal and metal-adjacent noises, from the floridly melodic to the stochastically apocalyptic.)

Gradient Dissent (Ambient, noise, epicore and other abstract geometries.)

Dancing With Tears (Pop, rock, hip hop and other sentimental forms.)

And finally, although surely this, if anything, will be of interest to absolutely nobody but me, I also used a combination of my own listening, broken down by genre, and the global 2019 genre lists, to produce a list of the songs I missed or intentionally avoided despite their being popular with the fans of my favorite genres.

2019 Greatest Misses (for glenn mcdonald)

I made versions of this Misses list in November and December, to see what I was in danger of missing before the year actually ended, so these songs are the reverse-evolutionary survivors of two generations of augmented remedial listening. But I played it again just now, and it still sounds basically great to me. I'm pretty sure I could spend the next year listening to nothing but songs I missed in 2019 despite trying to hear them all, and it would be just as great in sonic terms. There's something hypothetically comforting in that, at least until I starting trying to figure out what kind of global catastrophe I'm effectively imagining here. I'm alive, but all the musicians in the world are dead? Or there's no surviving technology for recording music, but somehow Spotify servers and the worldwide cell and wifi networks still work?

Easier to live. I now declare 2019 complete and archived. Onwards.

¶ If You Do That, the Robots Win · 16 April 2016 essay/listen/tech

[This is the script from a talk I delivered at the EMP Pop Conference today. It was written to be read aloud at an intentionally headlong pace, with somewhat-carefully timed blasts of interstitial music. I've included playable clip-links for the songs here, but the clips are usually from the middles of the songs, and I was playing the beginnings of them in the talk, so it's different. The whole playlist is here, although playing it as a standalone thing would make no sense at all.]

I used to take software jobs to be able to buy records, but buying records is now a way to hear all the world's music like collecting cars is a way to see more of the solar system.

So now I work at Spotify as a zookeeper for playlist-making robots. Recommendation robots have existed for a while now, but people have mostly used them for shopping. Go find me things I might want to buy. "You bought a snorkel, maybe you'd like to buy these other snorkels?"

But what streaming music makes possible, which online music stores did not, is actual programmed music experiences. Instead of trying to sell you more snorkels, these robots can take you out to swim around with the funny-looking fish.

And as robots begin to craft your actual listening experience, it is reasonable, and maybe even morally imperative, to ask if a playlist robot can have an authorial voice, and, if so, what it is?

The answer is: No. Robots have no taste, no agenda, no soul, no self. Moreover, there is no robot. I talk about robots because it's funny and gives you something you can picture, but that's not how anything really happens.

How everything really happens is this: people listen to songs. Different people listen to different songs, and we count which ones, and then try to use computers to do math to find patterns in these numbers. That's what my job actually involves. I go to work, I sit down at my desk (except I actually stand at my fancy Spotify standing desk, because I heard that sitting will kill you and if you die you miss a lot of new releases), and I type computer programs that count the actions of human listeners and do math and produce lists of songs.

So when anybody talks about a fight between machines and humans in music recommendation, you should know that those people do not know what the fuck they are talking about. Music recommendations are machines "versus" humans in the same way that omelets are spatulas "versus" eggs.

So the good news is that you can stop worrying that robots are trying to poison your listening. But the bad news is that you can start worrying about food safety and whether the people operating your spatulas have the faintest idea what food is supposed to taste like.

Because data makes some amazing things possible, but it also makes terrible, incoherent, counter-productive things possible. And I'm going to tell you about some of them.

Counting is the most basic kind of math, and yet even just counting things usefully, in music streaming, is harder than you probably think. For example, this is the most streamed track by the most streamed artist on Spotify:

Various Artists "Kelly Clarkson on Annie Lennox"

Do you recognize the band? They are called "Various Artists", and that is their song "Kelly Clarkson on Annie Lennox", from their album Women in Music - 2015 Stories.

But OK, that's obviously not what we meant. We just need to exclude short commentary tracks, and then this is the most streamed music track by the most streamed artist on Spotify:

Various Artists "El Preso"

Except that's "Various Artists" again. The most streamed music track by an actual artist on Spotify is:

Rihanna "Work"

OK, so that's starting to make some sense. Pretty much all exercises in programmatic music discovery begin with this: can you "discover" Rihanna?

Spotify just launched in Indonesia, and I happen to know that Indonesian music is awesome, because there are people there and they make music, so let's find out what the most popular Indonesian song is.

Justin Bieber "Love Yourself"

I kind of wanted to know what the most popular Indonesian song is, not just the song that is most popular in Indonesia. But if I restrict my query to artists whose country of origin is Indonesia, I get this:

Isyana Sarasvati "Kau Adalah"

Which seems like it might be the Indonesian Lisa Loeb. It's by Isyana Sarasvati, and I looked her up, and she is Indonesian! She's 23, and her Wikipedia page discusses the scholarship she got from the government of Singapore to study music at an academy there, and lists her solo recitals.

It turns out that our data about where artists are from is decent where we have it, but a lot of times we just don't. 34 of the top 100 songs in Indonesia are by artists for whom we don't have locations.

But remember math? Math is cool. In addition to counting listeners in Indonesia, we can compare the listening in Indonesia to the listening in the rest of the world, and find the songs are that most distinctively popular in Indonesia. That gets us to this:

TheOvertunes "Cinta Adalah"

That is The Overtunes, who turn out to be a band of three Indonesian brothers who became famous when one of them won X Factor Indonesia in 2013.

But that's still not really what I wanted. It's like being curious about Indonesian food and buying a bag of Indonesian supermarket-brand potato chips.

I kind of wanted to hear some, I dunno, Indonesian Indie music. I assume they have some, because they have people, and they have X Factor, and that's bound piss some people off enough to start their own bands.

So if we switch from just counting to doing a bit more data analysis -- actually, quite a lot of data analysis -- we can discover that yes, there is an indie scene in Indonesia, and we can computationally model which bands are more or less a part of it, and without ever stepping foot in Indonesia, we can produce an algorithmic introduction to The Sound of Indonesian Indie, and it begins with this:

Sheila on 7 "Dan..."

That is Shelia on 7 doing "Dan...", and I looked them up, too. Rolling Stone Indonesia said that their debut album was one of the 150 Greatest Indonesian Albums of All Time, and they are the first band to sell more than 1m copies of each of their first 3 albums in Indonesia alone.

Of course, they're also on Sony Music Indonesia, and I assume that at least some of those millions of people who bought their first 3 albums, before Spotify launched in Indonesia and destroyed the album-sales market, are still alive and still remember them. One of the hard parts about running a global music service from your headquarters in Stockholm and your music-intelligence outpost in Boston, is that you need to be able to find Indonesian music that people who already know about Indonesian music don't already know about.

But once you've modeled the locally-unsurprising canonical core of Indonesian Indie music, you can use that to find people who spend unusually large blocks of their listening time listening to canonical Indonesian Indie music (most of whom are in Indonesia, but they don't have to be; some of them might be off at a music academy in Singapore, where Spotify has been available since 2013), and then you can calculate what music is most distinctively popular among serious Indonesian Indie fans, even if you have no data to tell you where it comes from. And that gets us things like this:

Sisitipsi "Alkohol"

That is "Alkohol" by Sistipsi. A Google search for that song finds only 8400 results, which appear to all be in Indonesian. Their band home page is a wordpress.com site, and they had 263 global Spotify listeners last month.

PILOTZ "Memang Aku"

PILOTZ, with a Z. Also from Indonesia! 117 listeners.

Hellcrust "Janji Api"

Hellcrust. 44 listeners last month. I looked them up, and yes, they're from Jakarta.

199x "Goodest Riddance"

199x. 14 monthly listeners! Also, maybe actually from Malaysia, not Indonesia, but in music recommending it's almost as impressive if you can be a little bit wrong as it is if you can be right, because usually when you're wrong you'll get Polish folk-techno or metalcore with Harry Potter fanfic lyrics.

So that's what a lot of my days are like. Pose a question, write some code, find some songs, and then try to figure out whether those songs are even vaguely answering the question or not.

And if the question is about Indonesia, that method kind of works.

But we also have 100 million listeners on Spotify, and we would like to be able to produce personalized listening experiences for each of them. Actually, we'd like to be able to produce multiple listening experiences for each of them. And we can't hire all of our listeners to work for us full-time curating their own individual personal music experiences, because apparently the business model doesn't work? So it's computers or nothing.

People, it turns out, are somewhat harder than countries.

For starters, here is the track I have played the most on Spotify:

Jewel "Twinkle, Twinkle Little Star"

As humans, we might guess that it is not quite accurate to say that that is my favorite song, and we might have a very specific theory about why that is. As humans, we might guess that the number of times I have played the song after that has a different meaning:

CHVRCHES "Leave a Trace"

In the latter case, I love CHVRCHES so much. But in the former case, I love my daughter even more than I love CHVRCHES, and some nights she really needs to hear Jewel sing "Twinkle Twinkle Little Star" at bedtime.

And if we are still in the early days of algorithmically programmed listening experiences, at all, then we're in what I hope we will look back on as the early- to mid- prehistory of algorithmic personalized listening experiences. I can't tell you exactly how they work, because we're still trying to make them work. But I can tell you 7 things I've learned that I think are principles to guide us towards a future in which dumbfoundingly amazing music you could never find on your own just flows out of the invisible sea of information directly into your ears. When you want it to, I don't mean you can't shut it off.

1. No music listener is ever only one thing.

I mean, you can't assume they are. I have a coworker named Matt who basically only listens to skate-punk music, ever, and we test all personalization things on him first, because you can tell immediately if it's wrong. Right: Warzone "Rebels Til We Die". Wrong: The Damned "Wounded Wolf - Original Mix". But other than him, almost everybody turns out to have some non-obvious combination of tastes. I listen to beepy electronica (Red Cell "Vial of Dreams") and gentle soothing Dark Funeral "Where Shadows Forever Reign" and Kangding Ray "Ardent", and sentimental Southern European arena pop (Gianluca Corrao "Amanti d'estate"), and if you just average that all together it turns out you mostly end up with mopey indie music that I don't like at all: Wyvern Lingo "Beast at the Door"

2. All information is partial.

We know what you play on Spotify, but we don't know what you listen to on the radio in the car, or what your spouse plays in your house while you're making dinner, or what you loved as a kid or even what you played incessantly on Rdio before it went bankrupt. For example, this is one of my favorite new albums this year: Magnum "Sacred Blood 'Divine' Lies". I adore Magnum, but I hadn't played them on Spotify at all. But my robot knew they were similar to other things it knew I liked. Sometimes music "discovery" is not about discovering things that you don't know, it's about the computer inferring aspects of your taste that you had previously hidden from it.

3. Variety is good.

It is in the interest of listeners and Spotify and music makers if people listen to more and more varied music. If all anybody wanted to hear was this once a day -- Adele "Hello" -- there would be no music business and no streaming and no joy or sunlight. Part of my job is to crack open the shell of the sky. Terabrite "Hello". If you are excited to hear what happens next, you will be more likely to pay us $10, and we will pay the artists more for the music you play, and they will make more of it instead of getting terrible day-jobs working for inbound marketing companies, and the world will be a better place.

4. People like discovering new music.

They may hate the song you want them to love. They may have a limited tolerance for doing work to discover music, or for trial-and-erroring through lots of music they don't like in order to find it, but neither of those things mean that they wouldn't be thrilled by the right new song if somebody could find it for them. One of you will come up after this to ask me what this song is: Sweden "Stocholm". One of you, probably a different person, will wonder about this: Draper/Prides "Break Over You". I have like a million of those. I mean actually like an actual million of those.

5. Bernie Sanders is right.

It is in the interest of the world of music creators if the streaming music business exerts a bit of democratic-socialist pressure against income inequality. The incremental human value of another person listening to "Shake It Off" again is arguably positive, but it's probably also considerably smaller than the value of that person listening to a new song by a new songwriter who doesn't already have enough money to live out the rest of their life inside a Manhattan loft whose walls are covered with thumbdrives full of bitcoins and #1-fan selfies. Anthem Lights "Shake It Off". Taylor, if you're listening, I'm going to keep playing shitty covers of your songs until you put the real ones back on Spotify. That's how it works.

6. If you're going to try to play people what they actually like, you have to be prepared for whatever that is.

DJ Loppetiss "Janteloven 2016"

That's "Russelåter", which is a crazy Norwegian thing where high school kids finish their exams way before the end of the senior year, so in the spring they get together in little gangs, give themselves goofy gang names, purchase actual tour buses from the previous year's gangs, have them repainted with their gang logo, commission terrible crap-EDM gang theme songs from Norwegian producers for whom this is the most profitable local music market, and then spend weeks driving around the suburbs of Oslo in these buses, drinking and never changing their clothes and blasting their appalling theme songs. I did not make this up.

7. Recommendation incurs responsibility.

If people are going to give up minutes of their finite lives to listen to something they would otherwise never have been burdened with, it better have the potential, however vague or elusive, to change their life. You can't, however tantalizing the prospect might seem, just play something because you want to. (Aedliga "Futility Has Its Limits") Like I said, you definitely can't do that. If you do that, the robots win.

Thank you.

I used to take software jobs to be able to buy records, but buying records is now a way to hear all the world's music like collecting cars is a way to see more of the solar system.

So now I work at Spotify as a zookeeper for playlist-making robots. Recommendation robots have existed for a while now, but people have mostly used them for shopping. Go find me things I might want to buy. "You bought a snorkel, maybe you'd like to buy these other snorkels?"

But what streaming music makes possible, which online music stores did not, is actual programmed music experiences. Instead of trying to sell you more snorkels, these robots can take you out to swim around with the funny-looking fish.

And as robots begin to craft your actual listening experience, it is reasonable, and maybe even morally imperative, to ask if a playlist robot can have an authorial voice, and, if so, what it is?

The answer is: No. Robots have no taste, no agenda, no soul, no self. Moreover, there is no robot. I talk about robots because it's funny and gives you something you can picture, but that's not how anything really happens.

How everything really happens is this: people listen to songs. Different people listen to different songs, and we count which ones, and then try to use computers to do math to find patterns in these numbers. That's what my job actually involves. I go to work, I sit down at my desk (except I actually stand at my fancy Spotify standing desk, because I heard that sitting will kill you and if you die you miss a lot of new releases), and I type computer programs that count the actions of human listeners and do math and produce lists of songs.

So when anybody talks about a fight between machines and humans in music recommendation, you should know that those people do not know what the fuck they are talking about. Music recommendations are machines "versus" humans in the same way that omelets are spatulas "versus" eggs.

So the good news is that you can stop worrying that robots are trying to poison your listening. But the bad news is that you can start worrying about food safety and whether the people operating your spatulas have the faintest idea what food is supposed to taste like.

Because data makes some amazing things possible, but it also makes terrible, incoherent, counter-productive things possible. And I'm going to tell you about some of them.

Counting is the most basic kind of math, and yet even just counting things usefully, in music streaming, is harder than you probably think. For example, this is the most streamed track by the most streamed artist on Spotify:

Various Artists "Kelly Clarkson on Annie Lennox"

Do you recognize the band? They are called "Various Artists", and that is their song "Kelly Clarkson on Annie Lennox", from their album Women in Music - 2015 Stories.

But OK, that's obviously not what we meant. We just need to exclude short commentary tracks, and then this is the most streamed music track by the most streamed artist on Spotify:

Various Artists "El Preso"

Except that's "Various Artists" again. The most streamed music track by an actual artist on Spotify is:

Rihanna "Work"

OK, so that's starting to make some sense. Pretty much all exercises in programmatic music discovery begin with this: can you "discover" Rihanna?

Spotify just launched in Indonesia, and I happen to know that Indonesian music is awesome, because there are people there and they make music, so let's find out what the most popular Indonesian song is.

Justin Bieber "Love Yourself"

I kind of wanted to know what the most popular Indonesian song is, not just the song that is most popular in Indonesia. But if I restrict my query to artists whose country of origin is Indonesia, I get this:

Isyana Sarasvati "Kau Adalah"

Which seems like it might be the Indonesian Lisa Loeb. It's by Isyana Sarasvati, and I looked her up, and she is Indonesian! She's 23, and her Wikipedia page discusses the scholarship she got from the government of Singapore to study music at an academy there, and lists her solo recitals.

It turns out that our data about where artists are from is decent where we have it, but a lot of times we just don't. 34 of the top 100 songs in Indonesia are by artists for whom we don't have locations.

But remember math? Math is cool. In addition to counting listeners in Indonesia, we can compare the listening in Indonesia to the listening in the rest of the world, and find the songs are that most distinctively popular in Indonesia. That gets us to this:

TheOvertunes "Cinta Adalah"

That is The Overtunes, who turn out to be a band of three Indonesian brothers who became famous when one of them won X Factor Indonesia in 2013.

But that's still not really what I wanted. It's like being curious about Indonesian food and buying a bag of Indonesian supermarket-brand potato chips.

I kind of wanted to hear some, I dunno, Indonesian Indie music. I assume they have some, because they have people, and they have X Factor, and that's bound piss some people off enough to start their own bands.

So if we switch from just counting to doing a bit more data analysis -- actually, quite a lot of data analysis -- we can discover that yes, there is an indie scene in Indonesia, and we can computationally model which bands are more or less a part of it, and without ever stepping foot in Indonesia, we can produce an algorithmic introduction to The Sound of Indonesian Indie, and it begins with this:

Sheila on 7 "Dan..."

That is Shelia on 7 doing "Dan...", and I looked them up, too. Rolling Stone Indonesia said that their debut album was one of the 150 Greatest Indonesian Albums of All Time, and they are the first band to sell more than 1m copies of each of their first 3 albums in Indonesia alone.

Of course, they're also on Sony Music Indonesia, and I assume that at least some of those millions of people who bought their first 3 albums, before Spotify launched in Indonesia and destroyed the album-sales market, are still alive and still remember them. One of the hard parts about running a global music service from your headquarters in Stockholm and your music-intelligence outpost in Boston, is that you need to be able to find Indonesian music that people who already know about Indonesian music don't already know about.

But once you've modeled the locally-unsurprising canonical core of Indonesian Indie music, you can use that to find people who spend unusually large blocks of their listening time listening to canonical Indonesian Indie music (most of whom are in Indonesia, but they don't have to be; some of them might be off at a music academy in Singapore, where Spotify has been available since 2013), and then you can calculate what music is most distinctively popular among serious Indonesian Indie fans, even if you have no data to tell you where it comes from. And that gets us things like this:

Sisitipsi "Alkohol"

That is "Alkohol" by Sistipsi. A Google search for that song finds only 8400 results, which appear to all be in Indonesian. Their band home page is a wordpress.com site, and they had 263 global Spotify listeners last month.

PILOTZ "Memang Aku"

PILOTZ, with a Z. Also from Indonesia! 117 listeners.

Hellcrust "Janji Api"

Hellcrust. 44 listeners last month. I looked them up, and yes, they're from Jakarta.

199x "Goodest Riddance"

199x. 14 monthly listeners! Also, maybe actually from Malaysia, not Indonesia, but in music recommending it's almost as impressive if you can be a little bit wrong as it is if you can be right, because usually when you're wrong you'll get Polish folk-techno or metalcore with Harry Potter fanfic lyrics.

So that's what a lot of my days are like. Pose a question, write some code, find some songs, and then try to figure out whether those songs are even vaguely answering the question or not.

And if the question is about Indonesia, that method kind of works.

But we also have 100 million listeners on Spotify, and we would like to be able to produce personalized listening experiences for each of them. Actually, we'd like to be able to produce multiple listening experiences for each of them. And we can't hire all of our listeners to work for us full-time curating their own individual personal music experiences, because apparently the business model doesn't work? So it's computers or nothing.

People, it turns out, are somewhat harder than countries.

For starters, here is the track I have played the most on Spotify:

Jewel "Twinkle, Twinkle Little Star"

As humans, we might guess that it is not quite accurate to say that that is my favorite song, and we might have a very specific theory about why that is. As humans, we might guess that the number of times I have played the song after that has a different meaning:

CHVRCHES "Leave a Trace"

In the latter case, I love CHVRCHES so much. But in the former case, I love my daughter even more than I love CHVRCHES, and some nights she really needs to hear Jewel sing "Twinkle Twinkle Little Star" at bedtime.

And if we are still in the early days of algorithmically programmed listening experiences, at all, then we're in what I hope we will look back on as the early- to mid- prehistory of algorithmic personalized listening experiences. I can't tell you exactly how they work, because we're still trying to make them work. But I can tell you 7 things I've learned that I think are principles to guide us towards a future in which dumbfoundingly amazing music you could never find on your own just flows out of the invisible sea of information directly into your ears. When you want it to, I don't mean you can't shut it off.

1. No music listener is ever only one thing.

I mean, you can't assume they are. I have a coworker named Matt who basically only listens to skate-punk music, ever, and we test all personalization things on him first, because you can tell immediately if it's wrong. Right: Warzone "Rebels Til We Die". Wrong: The Damned "Wounded Wolf - Original Mix". But other than him, almost everybody turns out to have some non-obvious combination of tastes. I listen to beepy electronica (Red Cell "Vial of Dreams") and gentle soothing Dark Funeral "Where Shadows Forever Reign" and Kangding Ray "Ardent", and sentimental Southern European arena pop (Gianluca Corrao "Amanti d'estate"), and if you just average that all together it turns out you mostly end up with mopey indie music that I don't like at all: Wyvern Lingo "Beast at the Door"

2. All information is partial.

We know what you play on Spotify, but we don't know what you listen to on the radio in the car, or what your spouse plays in your house while you're making dinner, or what you loved as a kid or even what you played incessantly on Rdio before it went bankrupt. For example, this is one of my favorite new albums this year: Magnum "Sacred Blood 'Divine' Lies". I adore Magnum, but I hadn't played them on Spotify at all. But my robot knew they were similar to other things it knew I liked. Sometimes music "discovery" is not about discovering things that you don't know, it's about the computer inferring aspects of your taste that you had previously hidden from it.

3. Variety is good.

It is in the interest of listeners and Spotify and music makers if people listen to more and more varied music. If all anybody wanted to hear was this once a day -- Adele "Hello" -- there would be no music business and no streaming and no joy or sunlight. Part of my job is to crack open the shell of the sky. Terabrite "Hello". If you are excited to hear what happens next, you will be more likely to pay us $10, and we will pay the artists more for the music you play, and they will make more of it instead of getting terrible day-jobs working for inbound marketing companies, and the world will be a better place.

4. People like discovering new music.

They may hate the song you want them to love. They may have a limited tolerance for doing work to discover music, or for trial-and-erroring through lots of music they don't like in order to find it, but neither of those things mean that they wouldn't be thrilled by the right new song if somebody could find it for them. One of you will come up after this to ask me what this song is: Sweden "Stocholm". One of you, probably a different person, will wonder about this: Draper/Prides "Break Over You". I have like a million of those. I mean actually like an actual million of those.

5. Bernie Sanders is right.

It is in the interest of the world of music creators if the streaming music business exerts a bit of democratic-socialist pressure against income inequality. The incremental human value of another person listening to "Shake It Off" again is arguably positive, but it's probably also considerably smaller than the value of that person listening to a new song by a new songwriter who doesn't already have enough money to live out the rest of their life inside a Manhattan loft whose walls are covered with thumbdrives full of bitcoins and #1-fan selfies. Anthem Lights "Shake It Off". Taylor, if you're listening, I'm going to keep playing shitty covers of your songs until you put the real ones back on Spotify. That's how it works.

6. If you're going to try to play people what they actually like, you have to be prepared for whatever that is.

DJ Loppetiss "Janteloven 2016"

That's "Russelåter", which is a crazy Norwegian thing where high school kids finish their exams way before the end of the senior year, so in the spring they get together in little gangs, give themselves goofy gang names, purchase actual tour buses from the previous year's gangs, have them repainted with their gang logo, commission terrible crap-EDM gang theme songs from Norwegian producers for whom this is the most profitable local music market, and then spend weeks driving around the suburbs of Oslo in these buses, drinking and never changing their clothes and blasting their appalling theme songs. I did not make this up.

7. Recommendation incurs responsibility.

If people are going to give up minutes of their finite lives to listen to something they would otherwise never have been burdened with, it better have the potential, however vague or elusive, to change their life. You can't, however tantalizing the prospect might seem, just play something because you want to. (Aedliga "Futility Has Its Limits") Like I said, you definitely can't do that. If you do that, the robots win.

Thank you.

¶ The Satan:Noise Ratio · 19 April 2015 essay/listen/tech

Through a roundabout series of connections, I got invited to be part of a roundtable panel at EMP Pop 2015, which ended up (in keeping with this year's themes of Music, Weirdness and Transgression) being a group deliberation on the subject of The Worst Song in the World.

And since I was going to be there, and conference rules allowed for solo proposals in addition to the group thing, I figured I might as well also try something fun and weird and outside of my usual current data-alchemical domain.

In the end the thing ended up being not quite free of data-alchemy in the same way that my songs without drums always somehow develop drum tracks. But it's not about data alchemy. At least mostly not.

All the talks are supposed to eventually be available in audio form, but in the meantime, here is the script I was more or less working from. To reproduce the auditorium experience you should blast at least the first 20 seconds or so of each song as you encounter it in the text, and imagine me intoning the names of the songs in monster-truck-rally announcer-voice, and then saying everything else really fast and excitedly because a) you only get 20 minutes, and b) it was 9:20am on the Sunday morning after the Saturday night conference party and some people might need a little help relocating their attentiveness.

(Also, be forewarned that neither the talk nor the music discussed is intended for underage audiences or people who are insecure about religion or genuinely frightened by grown men growling like monsters.)

The Satan:Noise Ratio

or

Triangulations of the Abyss

I grew up in what I wouldn't call a religious community, exactly, but certainly one that was dominated by the assumption of Christianity. My social status was kind of established when I told two members of the football team that the universe was formed out of dust, not Godliness, and it really didn't make any difference whether you liked that idea or not. This was second grade. We had a football team in second grade.

By the time I discovered heavy metal, I was pretty ready for some kind of comprehensive alternative. Science fiction, existentialism, atheism, algebra, Black Sabbath. These all seemed to frighten people, which suggested they were good and powerful ingredients. But if you're going to fight against football in Texas, you have to have your shit organized. You need a program.

Obviously as an atheist I wasn't going to believe in Satan any more than I was going to believe in elves, but the idea of Satanism seemed potentially compelling anyway. Like Scientology, but with roots, and better iconography, and fewer videotapes to buy. And I had learned a lot from reading the liner notes to Rush albums, so I dug into Black Sabbath albums with the same enthusiasm.

Black Sabbath "After Forever"

[You have to remember that at the time, that was really heavy. But the words go like this:]

Black Sabbath "Heaven & Hell"

But OK, what about Judas Priest. Didn't two guys kill themselves after listening to Judas Priest? Now we're getting serious.

Judas Priest "Saints in Hell"

But whatever. Before I found the Satanism I was looking for, New Wave happened, and it turned out that androgyny and drum machines scared the football boys way more than Satan.

And then I left Texas and went to Harvard and took on a very different set of social challenges. So the next time I cycled back into metal, as I always do no matter how many other things I'm into, I wasn't looking for more elaborate pentagrams to shock football boys, I was looking for more hermeneutic nuances to situate and contextualize metal for comparative-lit majors who listened to the Minutemen and the Talking Heads.

Slayer. The Antichrist. Fucking yes. Slayer makes Sabbath with Ozzy sound like Wings, and Sabbath with Dio sound like Van Halen with Sammy Hagar.

Slayer "The Antichrist"

But what about Bathory? In Nomine Satanas. Fucking Latin! Or something...

Bathory "In Nomine Satanas"

Emperor. These are Norwegian actual church-burning dudes. Although, it's Scandinavia, so the church-burning was actually part of a progressive urban planning scheme with multi-use pentagrams in pleasant, radiant-heated public spaces.

Emperor "Inno a Satana"

Gorgoroth "Possessed by Satan"

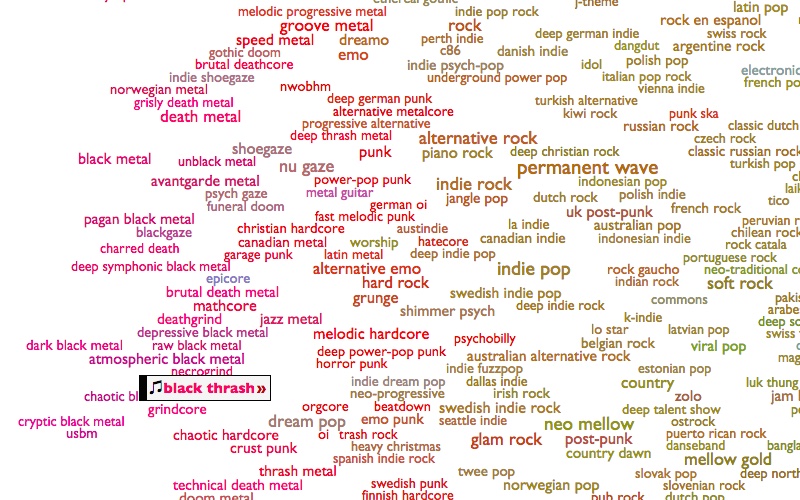

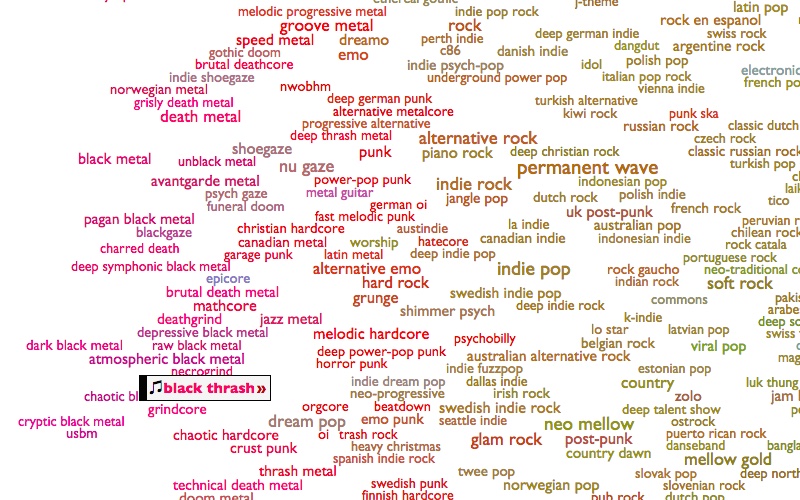

And maybe what we fear guides our evasions so inexorably that we always end up confirming our suspicions by our nature, but my love of metal motivated and informed my work designing data-analysis software as much as it haunted my attempts to understand emotional resonance, and gradually over the years my writing about music for people bled into writing about music for computers, and that's how I eventually ended up at Spotify, where we have a lot of computers and the largest mass of data about music that humanity has ever collected. And this makes it possible to find out about a lot of metal that you might not otherwise know about. A lot. And a lot of everything else. So I ended up making this genre map, to try to make some sense of it all.

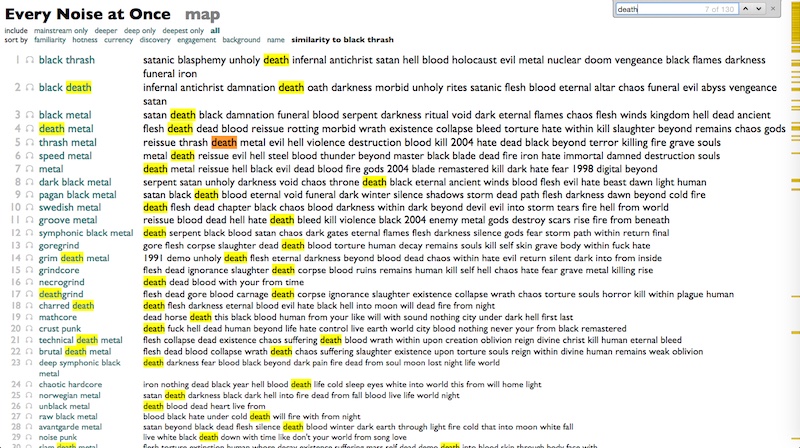

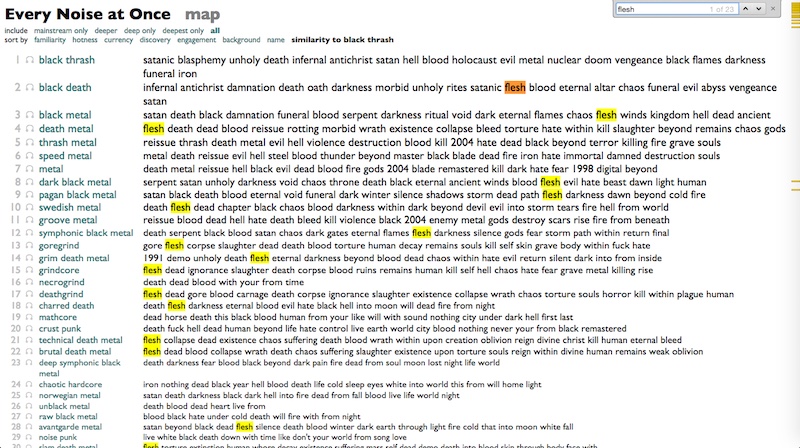

And having organized the world into 1375 genres (which is approximately 666 times 2), I can now answer some other questions about them. Just a few days ago, in fact, purely coincidentally and in no way because I was writing this talk at the last minute without a really clear idea where I was going with it, I decided to reverse-index all the words in the titles of all the songs in the world, and then, using BLACK MATH, find and rank the words that appear most disproportionately in each genre.

It wasn't totally obvious whether this would produce a magic quantification of scattered souls, or a polite visit from some Mumford-and-Sons fans in the IT department, but here are some examples of what it produced in a few genres you might know:

a cappella: medley love somebody your girl home time over will with when need around life what tonight song that don't just

acoustic blues: blues woman boogie baby mama moan down mississippi gonna ain't going worried chicago shake long don't rider jail poor woogie

modern country rock: country beer that's that whiskey love good like cowboy truck don't she's carolina back ain't just wanna this with dirt

east coast hip hop: featuring edited kool explicit rhyme triple hood shit album game check ghetto what streets money flow version that style

west coast rap: gangsta dogg featuring niggaz nate snoop hood ghetto playa money pimp thang shit smoke game bitch life funk ain't west

I'd say that shit is doing something. [The whole thing is here.]

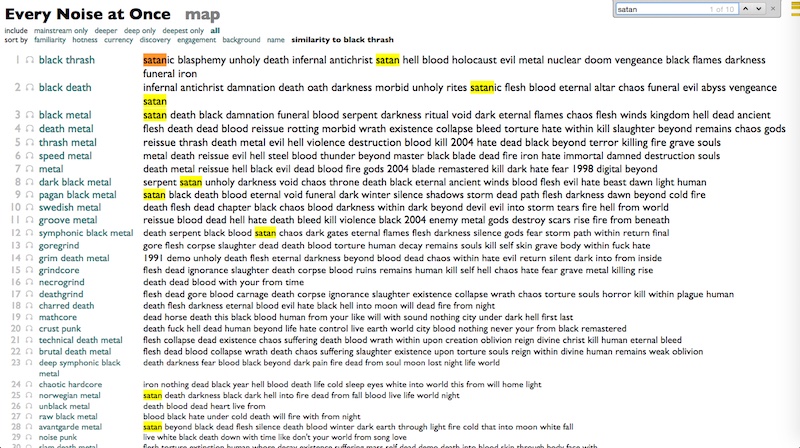

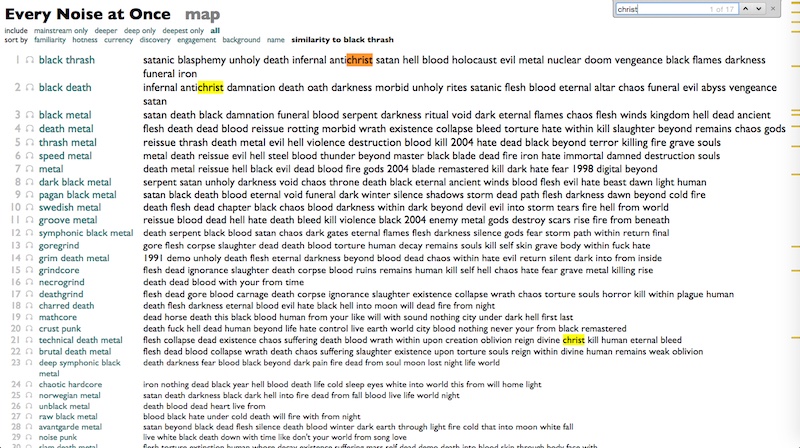

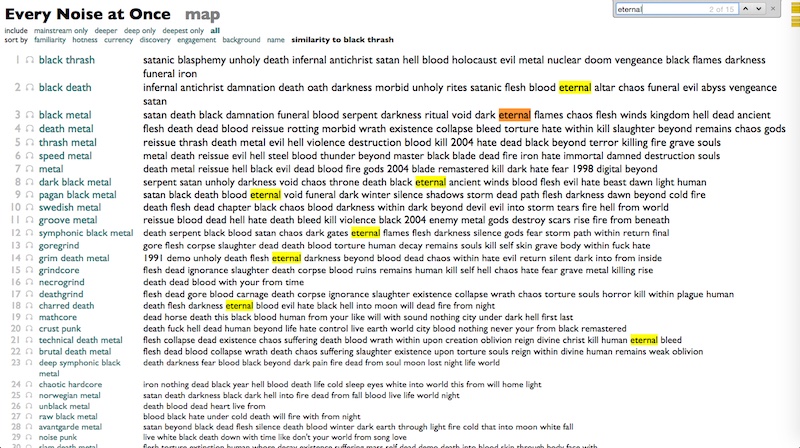

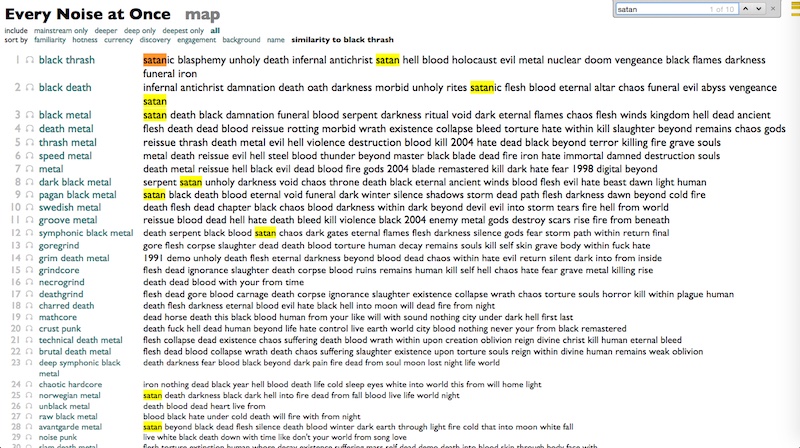

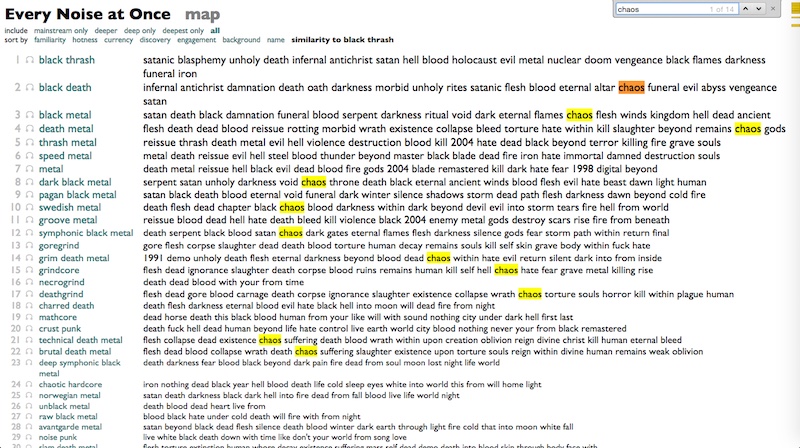

Using this, I can finally figure out the most Satanic of all metal subgenres. It is Black Thrash, whose top words go like this:

satanic blasphemy unholy death infernal antichrist satan hell blood holocaust evil metal nuclear doom vengeance black flames darkness funeral iron

If Satanism is fucking anywhere, it is here.

Nifelheim "Envoy of Lucifer"

OK, no idea what they're saying there.

Destroyer 666 "Satanic Speed Metal"

Um.

Warhammer "The Claw of Religion"

Sathanas "Reign of the Antichrist"

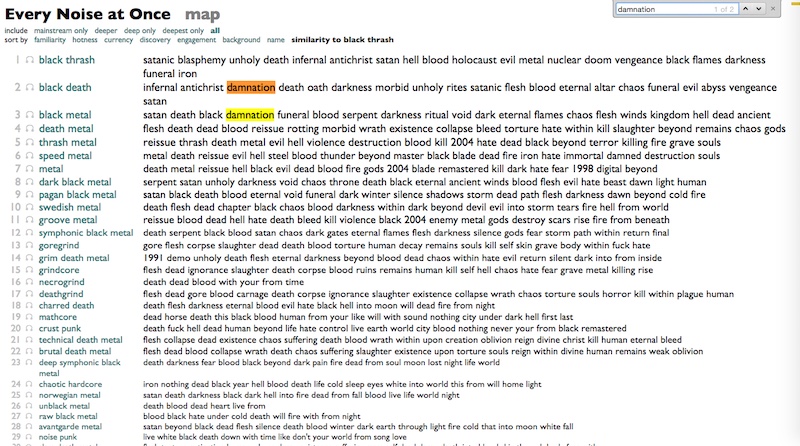

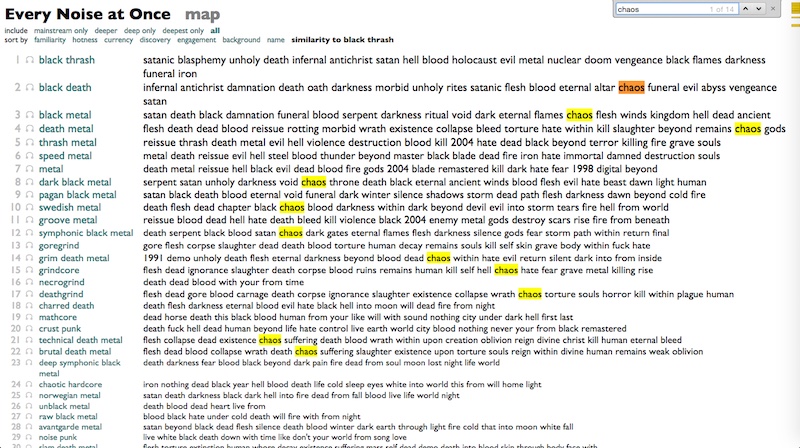

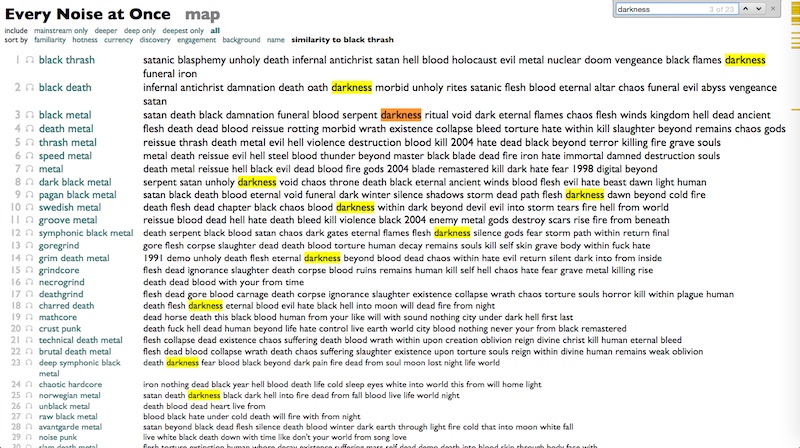

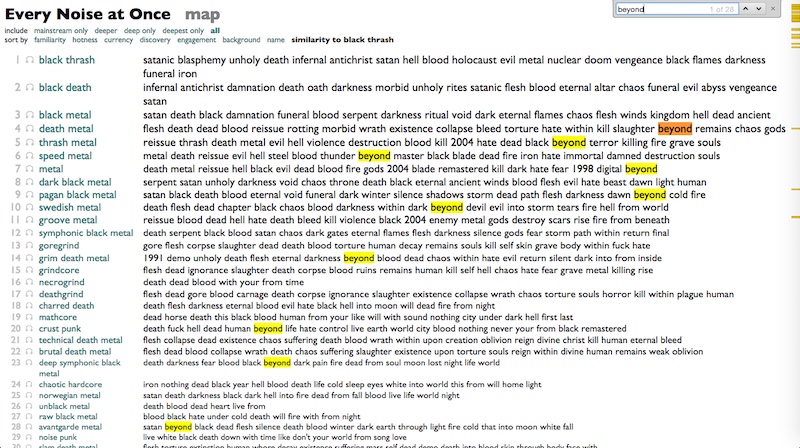

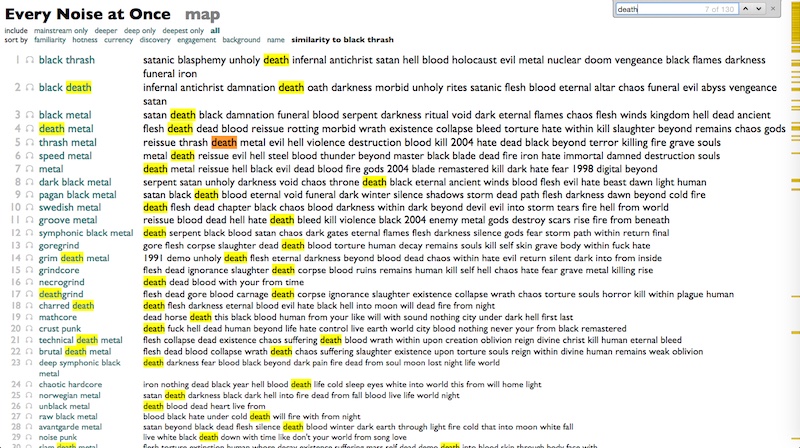

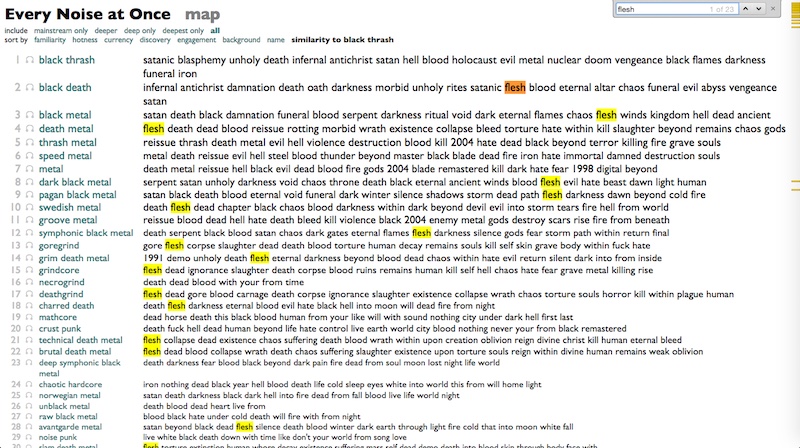

However, I have a lot of other metal subgenres to work with, and I can actually reorganize the world as if Black Thrash were its point of origin, and then as we move slowly away from that point, genre by genre, we can start to see the patterns change.

"Satan" begins to disappear.

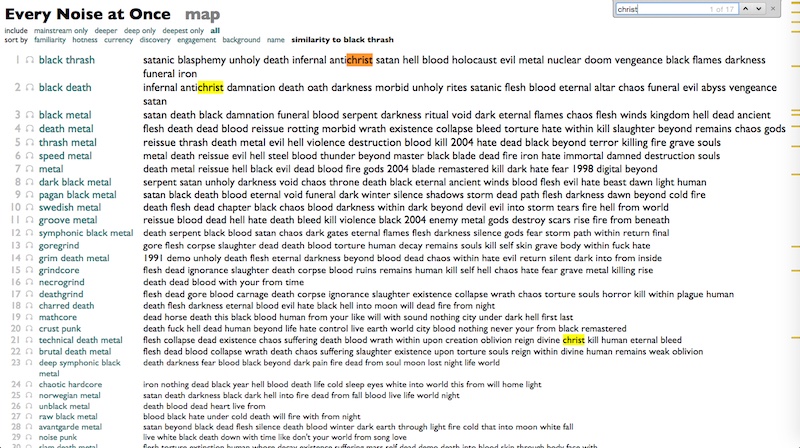

"Christ" goes away.

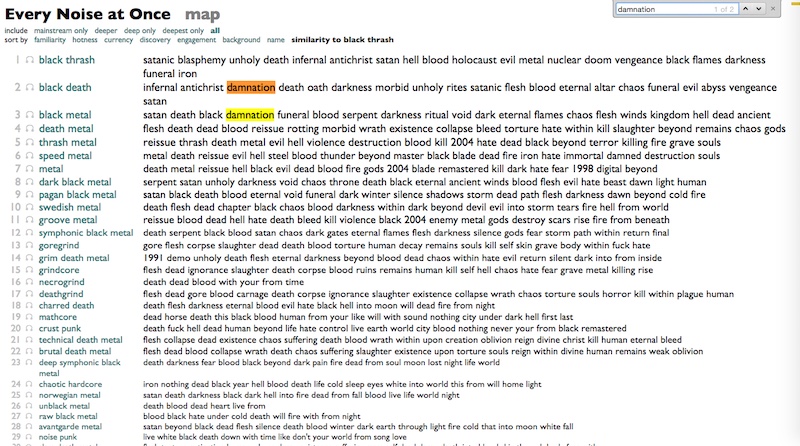

"Damnation" no longer so much of a concern.

"Chaos" starts to appear.

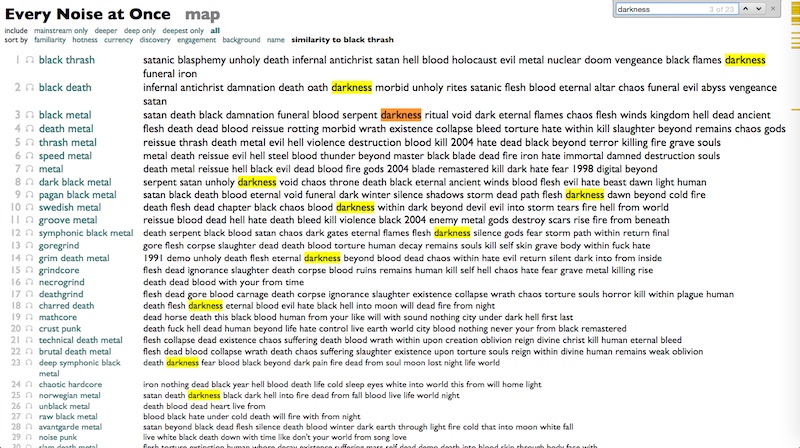

"Darkness" is everywhere.

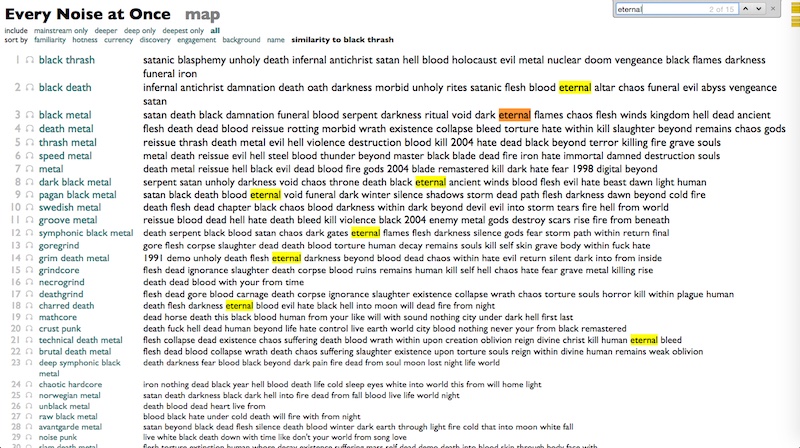

"Eternal" fascinates us.

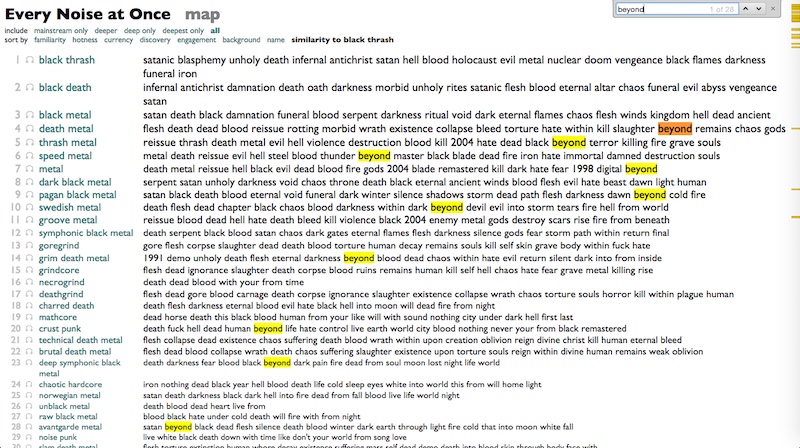

As does "Beyond".

"Death", always death.

And over and over, at the top of almost every list that doesn't start with "Death": "Flesh".

Except groove metal, where the number 1 term is "Reissue".

So my mistake, maybe, was in assuming I was looking for a philosophy that called itself Satanic. Give up that constraint, and ideas start to coalesce after all.

Entombed "Left Hand Path"

Celtic Frost "Os Abysmi Vel Daath"

OK, first of all, the band is called Totalselfhatred, and they sound like this. Dreamy.

Deathspell Omega "Chaining the Katechon"

That's a 22-minute song, and it does not fade in.

1. Babel. Acceptance of chaos, instead of a futile struggle for order or serenity

2. The Codex. To exist in chaos is to seek complexity over simplicity

3. The Void. There is beauty in darkness

4. The Scythe. There are either no illusions, or all illusions, but either way, only death is real

Which all adds up, I think, to something that I basically understood in second grade, after all: grimly acknowledged free will. That is the philosophical core of metal, as an art form. That is the exact rebellion I was seeking. To choose Satan, and particularly to choose Satan without giving him any positive qualities, is to assert that the act of choosing is more important than the actual choice. To choose death is to assert that choosing is more important than living. To choose death symbolically is somewhat more powerful than choosing it literally, because you can choose it symbolically more than once, while gives you a chance to refine your symbolism.

Blut Aus Nord "The Choir of the Dead"

That is Blut Aus Nord's "The Choir of the Dead", from an album actually called The Work Which Transforms God. What does it say? I dunno. But what does it mean? "Hail Satan" is "Think for yourself" plus noise.

Thank you, and see you in Hell.

[The whole playlist that I was playing from is on Spotify here: Triangulations of the Abyss.]

Thanks to the Program Committee and the audience for indulging this whim, and particularly to Eric Weisbard for backing up his early-morning scheduling of this racket by showing up to moderate the session himself.

And since I was going to be there, and conference rules allowed for solo proposals in addition to the group thing, I figured I might as well also try something fun and weird and outside of my usual current data-alchemical domain.

In the end the thing ended up being not quite free of data-alchemy in the same way that my songs without drums always somehow develop drum tracks. But it's not about data alchemy. At least mostly not.

All the talks are supposed to eventually be available in audio form, but in the meantime, here is the script I was more or less working from. To reproduce the auditorium experience you should blast at least the first 20 seconds or so of each song as you encounter it in the text, and imagine me intoning the names of the songs in monster-truck-rally announcer-voice, and then saying everything else really fast and excitedly because a) you only get 20 minutes, and b) it was 9:20am on the Sunday morning after the Saturday night conference party and some people might need a little help relocating their attentiveness.

(Also, be forewarned that neither the talk nor the music discussed is intended for underage audiences or people who are insecure about religion or genuinely frightened by grown men growling like monsters.)

The Satan:Noise Ratio

or

Triangulations of the Abyss

I grew up in what I wouldn't call a religious community, exactly, but certainly one that was dominated by the assumption of Christianity. My social status was kind of established when I told two members of the football team that the universe was formed out of dust, not Godliness, and it really didn't make any difference whether you liked that idea or not. This was second grade. We had a football team in second grade.

By the time I discovered heavy metal, I was pretty ready for some kind of comprehensive alternative. Science fiction, existentialism, atheism, algebra, Black Sabbath. These all seemed to frighten people, which suggested they were good and powerful ingredients. But if you're going to fight against football in Texas, you have to have your shit organized. You need a program.

Obviously as an atheist I wasn't going to believe in Satan any more than I was going to believe in elves, but the idea of Satanism seemed potentially compelling anyway. Like Scientology, but with roots, and better iconography, and fewer videotapes to buy. And I had learned a lot from reading the liner notes to Rush albums, so I dug into Black Sabbath albums with the same enthusiasm.

Black Sabbath "After Forever"

[You have to remember that at the time, that was really heavy. But the words go like this:]

I think it was true it was people like you that crucified ChristPuzzling. But then, as if realizing they were missing something, they got a new singer whose name was Dio, and made an album called Heaven & Hell.

I think it is sad the opinion you had was the only one voiced

Will you be so sure when your day is near, say you don't believe?

You had the chance but you turned it down, now you can't retrieve

Black Sabbath "Heaven & Hell"

Sing me a song, you're a singerThe music: solid. The lyrics? Not exactly "Red Barchetta".

Do me a wrong, you're a bringer of evil

The Devil is never a maker

The less that you give, you're a taker

So it's on and on and on, it's Heaven and Hell, oh well

…

Fool, fool! You've got to bleed for the dancer!

But OK, what about Judas Priest. Didn't two guys kill themselves after listening to Judas Priest? Now we're getting serious.

Judas Priest "Saints in Hell"

Cover your fistsOK, if I wanted a fucking rhyming "evil" version of Noah's Ark...

Razor your spears

It's been our possession

For 8,000 years

Fetch the scream eagles

Unleash the wild cats

Set loose the king cobras

And blood sucking bats

But whatever. Before I found the Satanism I was looking for, New Wave happened, and it turned out that androgyny and drum machines scared the football boys way more than Satan.

And then I left Texas and went to Harvard and took on a very different set of social challenges. So the next time I cycled back into metal, as I always do no matter how many other things I'm into, I wasn't looking for more elaborate pentagrams to shock football boys, I was looking for more hermeneutic nuances to situate and contextualize metal for comparative-lit majors who listened to the Minutemen and the Talking Heads.

Slayer. The Antichrist. Fucking yes. Slayer makes Sabbath with Ozzy sound like Wings, and Sabbath with Dio sound like Van Halen with Sammy Hagar.

Slayer "The Antichrist"

I am the AntichristSo, that's not Satanic, that's Christian. I mean, it's sort of ironic, Slayer of course were the original modern hipsters.

All love is lost

Insanity is what I am

Eternally my soul will rot (rot... rot)

But what about Bathory? In Nomine Satanas. Fucking Latin! Or something...

Bathory "In Nomine Satanas"

Ink the pen with bloodJesus fucking christ: more fealty.

Now sign your destiny to me

Emperor. These are Norwegian actual church-burning dudes. Although, it's Scandinavia, so the church-burning was actually part of a progressive urban planning scheme with multi-use pentagrams in pleasant, radiant-heated public spaces.

Emperor "Inno a Satana"

O' mighty Lord of the Night. Master of beasts. Bringer of awe and derision.Satan's uvula! "Harkee"?

Thou whose spirit lieth upon every act of oppression, hatred and strife.

Thou whose presence dwelleth in every shadow.

Thou who strengthen the power of every quietus.

Thou who sway every plague and storm.

Harkee.

Gorgoroth "Possessed by Satan"

worldwide revolution has occurredWe rape the nuns with desire? This is a program of sorts, I guess. But not one that offered solutions to any problems I actually had. But after a while, I kind of stopped asking music to solve any problems in my life that weren't about music. As an adult, the main thing I asked from my Satanic Norwegian metal was leads for where I could find more of it. The most constant internal theme in my life has been the desperate gnawing suspicion that all the music I know is only the tiniest sliver of what actually exists.

holy war, execution of sodomy

We are possessed by the moon

We are possessed by evil

We are possessed by Satan

possessed

possessed by satan

and then we rape the nuns with desire

And maybe what we fear guides our evasions so inexorably that we always end up confirming our suspicions by our nature, but my love of metal motivated and informed my work designing data-analysis software as much as it haunted my attempts to understand emotional resonance, and gradually over the years my writing about music for people bled into writing about music for computers, and that's how I eventually ended up at Spotify, where we have a lot of computers and the largest mass of data about music that humanity has ever collected. And this makes it possible to find out about a lot of metal that you might not otherwise know about. A lot. And a lot of everything else. So I ended up making this genre map, to try to make some sense of it all.

And having organized the world into 1375 genres (which is approximately 666 times 2), I can now answer some other questions about them. Just a few days ago, in fact, purely coincidentally and in no way because I was writing this talk at the last minute without a really clear idea where I was going with it, I decided to reverse-index all the words in the titles of all the songs in the world, and then, using BLACK MATH, find and rank the words that appear most disproportionately in each genre.

It wasn't totally obvious whether this would produce a magic quantification of scattered souls, or a polite visit from some Mumford-and-Sons fans in the IT department, but here are some examples of what it produced in a few genres you might know:

a cappella: medley love somebody your girl home time over will with when need around life what tonight song that don't just

acoustic blues: blues woman boogie baby mama moan down mississippi gonna ain't going worried chicago shake long don't rider jail poor woogie

modern country rock: country beer that's that whiskey love good like cowboy truck don't she's carolina back ain't just wanna this with dirt

east coast hip hop: featuring edited kool explicit rhyme triple hood shit album game check ghetto what streets money flow version that style

west coast rap: gangsta dogg featuring niggaz nate snoop hood ghetto playa money pimp thang shit smoke game bitch life funk ain't west

I'd say that shit is doing something. [The whole thing is here.]

Using this, I can finally figure out the most Satanic of all metal subgenres. It is Black Thrash, whose top words go like this:

satanic blasphemy unholy death infernal antichrist satan hell blood holocaust evil metal nuclear doom vengeance black flames darkness funeral iron

If Satanism is fucking anywhere, it is here.

Nifelheim "Envoy of Lucifer"

OK, no idea what they're saying there.

Destroyer 666 "Satanic Speed Metal"

Um.

Warhammer "The Claw of Religion"

Since the beginning of timeIsn't that actually the narration from the beginning of The Fifth Element?

A weapon was built and protected

To keep the balance in line

To guard the "forces of the light"

Do you hear the cries of all the ones that fell?

Sathanas "Reign of the Antichrist"

From the fall of grace-I shall rise againWell, it's certainly Satanic. But it's Satanism as mirror-image Christianity. Like, imagine if Jackson Pollock's avant-garde transgression was taking Vermeer paintings and repainting them with left and right reversed!!!! To be fair, that's the usual way in which revolutions collapse into politics, hating the status quo's conclusions but being unable to escape its assumptions.

Avenging chosen one-Known as Satan’s son

However, I have a lot of other metal subgenres to work with, and I can actually reorganize the world as if Black Thrash were its point of origin, and then as we move slowly away from that point, genre by genre, we can start to see the patterns change.

"Satan" begins to disappear.

"Christ" goes away.

"Damnation" no longer so much of a concern.

"Chaos" starts to appear.

"Darkness" is everywhere.

"Eternal" fascinates us.

As does "Beyond".

"Death", always death.

And over and over, at the top of almost every list that doesn't start with "Death": "Flesh".

Except groove metal, where the number 1 term is "Reissue".

So my mistake, maybe, was in assuming I was looking for a philosophy that called itself Satanic. Give up that constraint, and ideas start to coalesce after all.

Entombed "Left Hand Path"

No one will take my soul awayEnslaved "Ethica Odini"

I carry my own will and make my day

You have the key to mysteryDantalion "Onward to Darkness"

Pick up the runes; unveil and see

Existence is your own adversary,Mitochondrion "Eternal Contempt of Man"

a path full of pain and madness.

Now the earth, sea, and sky all have tornDodecahedron "I, Chronocrator"

Now a gate from the void hath been born

Both the watchers and the unholy do agree

Eradicate that vermin filth humanity

Reigning formulas undoneWe are approaching a version of Nihilism that is not an absence, but an embrace of nothingness, an embrace of the finite, of finity.

Oaths sworn into silence

Our world will be without form

Our earth will be void

Celtic Frost "Os Abysmi Vel Daath"

Where I am there is no thing.Totalselfhatred "Enlightenment"

No God, no me, no inbetween.

OK, first of all, the band is called Totalselfhatred, and they sound like this. Dreamy.

I cannot change your destiny, can only help you thinkAnd then, maybe, the grand masters of this, Deathspell Omega.

As far as my horizons lead - your thoughts will be more deep

Hope inside is torturing me - keeps painfully alive

A light inside, a knowledge deep, that shines so bright!

Deathspell Omega "Chaining the Katechon"

That's a 22-minute song, and it does not fade in.

The task to be achieved, human vocationHere, then, are some potential tenets of a chaotic black metal philosophical program:

Is to become intensely mortal

Not to shrink back

Before the voices

coming from the gallows tree

A work making increasing sense

By its lack of sense

In the history of times there is

But the truth of bones and dust.

1. Babel. Acceptance of chaos, instead of a futile struggle for order or serenity

2. The Codex. To exist in chaos is to seek complexity over simplicity

3. The Void. There is beauty in darkness

4. The Scythe. There are either no illusions, or all illusions, but either way, only death is real

Which all adds up, I think, to something that I basically understood in second grade, after all: grimly acknowledged free will. That is the philosophical core of metal, as an art form. That is the exact rebellion I was seeking. To choose Satan, and particularly to choose Satan without giving him any positive qualities, is to assert that the act of choosing is more important than the actual choice. To choose death is to assert that choosing is more important than living. To choose death symbolically is somewhat more powerful than choosing it literally, because you can choose it symbolically more than once, while gives you a chance to refine your symbolism.

Blut Aus Nord "The Choir of the Dead"

That is Blut Aus Nord's "The Choir of the Dead", from an album actually called The Work Which Transforms God. What does it say? I dunno. But what does it mean? "Hail Satan" is "Think for yourself" plus noise.

Thank you, and see you in Hell.

[The whole playlist that I was playing from is on Spotify here: Triangulations of the Abyss.]

Thanks to the Program Committee and the audience for indulging this whim, and particularly to Eric Weisbard for backing up his early-morning scheduling of this racket by showing up to moderate the session himself.

¶ Post-Neo-Traditional Pop Post-Thing · 29 September 2014 essay/listen/tech

As part of a conference on Music and Genre at McGill University in Montreal, over this past weekend, I served as the non-academic curiosity at the center of a round-table discussion about the nature of musical genres, and of the natures of efforts to understand genres, and of the natures of efforts to understand the efforts to understand genres. Plus or minus one or two levels of abstraction, I forget exactly.

My "talk" to open this conversation was not strictly scripted to begin with, and I ended up rewriting my oblique speaking notes more or less over from scratch as the day was going on, anyway. One section, which I added as I listened to other people talk about the kinds of distinctions that "genres" represent, attempted to list some of the kinds of genres I have in my deliberately multi-definitional genre map. There ended up being so many of these that I mentioned only a selection of them during the talk. So here, for extended (potential) amusement, is the whole list I had on my screen:

Kinds of Genres

(And note that this isn't even one kind of kind of genre...)

- conventional genre (jazz, reggae)

- subgenre (calypso, sega, samba, barbershop)

- region (malaysian pop, lithumania)

- language (rock en espanol, hip hop tuga, telugu, malayalam)

- historical distance (vintage swing, traditional country)

- scene (slc indie, canterbury scene, juggalo, usbm)

- faction (east coast hip hop, west coast rap)

- aesthetic (ninja, complextro, funeral doom)

- politics (riot grrrl, vegan straight edge, unblack metal)

- aspirational identity (viking metal, gangster rap, skinhead oi, twee pop)

- retrospective clarity (protopunk, classic peruvian pop, emo punk)

- jokes that stuck (crack rock steady, chamber pop, fourth world)

- influence (britpop, italo disco, japanoise)

- micro-feud (dubstep, brostep, filthstep, trapstep)

- technology (c64, harp)

- totem (digeridu, new tribe, throat singing, metal guitar)

- isolationism (faeroese pop, lds, wrock)

- editorial precedent (c86, zolo, illbient)

- utility (meditation, chill-out, workout, belly dance)

- cultural (christmas, children's music, judaica)

- occasional (discofox, qawaali, disco polo)

- implicit politics (chalga, nsbm, dangdut)

- commerce (coverchill, guidance)

- assumed listening perspective (beatdown, worship, comic)

- private community (orgcore, ectofolk)

- dominant features (hip hop, metal, reggaeton)

- period (early music, ska revival)

- perspective of provenance (classical (composers), orchestral (performers))

- emergent self-identity (skweee, progressive rock)

- external label (moombahton, laboratorio, fallen angel)

- gender (boy band, girl group)

- distribution (viral pop, idol, commons, anime score, show tunes)

- cultural institution (tin pan alley, brill building pop, nashville sound)

- mechanism (mashup, hauntology, vaporwave)

- radio format (album rock, quiet storm, hurban)

- multiple dimensions (german ccm, hindustani classical)

- marketing (world music, lounge, modern classical, new age)

- performer demographics (military band, british brass band)

- arrangement (jazz trio, jug band, wind ensemble)

- competing terminology (hip hop, rap; mpb, brazilian pop music)

- intentions (tribute, fake)

- introspective fractality (riddim, deep house, chaotic black metal)

- opposition (alternative rock, r-neg-b, progressive bluegrass)

- otherness (noise, oratory, lowercase, abstract, outsider)

- parallel terminology (gothic symphonic metal, gothic americana, gothic post-punk; garage rock, uk garage)

- non-self-explanatory (fingerstyle, footwork, futurepop, jungle)