17 April 2014 to 18 October 2013

In between other tasks at work today, I made some playlists.

¶ New Echoes · 6 March 2014 listen/tech

Music is the thing humans do best, and all the astonishing music in the world, or close enough, is now available online. This is basically more awesome than the grandest future I ever imagined as a kid.

But that's a lot of music. How do we make any kind of sense of it, so that this vast theoretical grandness can have any kind of actual practical significance? How do you listen to anything when you can suddenly hear every noise at once?

Those are questions I am paid to try to help answer. I've been working for a small music-intelligence startup in Somerville called The Echo Nest. We've been running the back-end data-analysis systems that supply recommendations, personalization and music-discovery ideas to a bunch of streaming music services. When I tell people this, they usually say "Like Spotify?" And I say "Yes, like Spotify."

But although we've been working with Spotify in various capacities, and various non-Spotify developers have made applications that combine our things with Spotify's music, we haven't been running the parts of Spotify that we run for other services. This has been an ongoing personal frustration, because Spotify is the most visible on-demand streaming music service in the world, and I've been pretty convinced that we could help them do a dramatically better job.

We are now going to get that chance. The Echo Nest has, in fact, just been wholly acquired by Spotify. Starting today, it's actually my job to try to improve essentially everything about Spotify that matters to me.

And this is only barely the beginning. I think we are, I mean collectively as humanity, only just at the dawn of the era of infinite music. The current streaming-music interaction-models and feature-sets are as much vestiges of our past technical constraints as anything else. It's as if we have jumped from the horse-drawn carriage to the free personal teleporter, suddenly, without the intervening benefit of even basic maps, never mind language translators or cultural history or GPS.

For the world of music to become something we actually inhabit, natively, as opposed to a bunch of awkward phone icons into which we try to contort our curiosity and wonder, or a vast unknown from which we cower and seek familiar comfortable retreats, it's going to take a lot more than "Play me more stuff like Dave Matthews, but do a better job of it." It's going to require that we belatedly render this vast world navigable, and chart it accurately and compellingly, and put sensible enough control panels on the teleporters that you have some prayer of not just constantly zapping yourself 60' deep into an exotic undiscovered faraway cliff face.

So that's what I'm going to be working on now.

[PS: I no longer remember anything memorable or inspiring or even intelligible anybody ever said to introduce my previous acquisitions, but by way of explaining the Echo Nest purchase, Spotify CEO Daniel Ek said this: "At Spotify, we want to get people to listen to more music."]

[PPS: And it's going to take a little while to get Echo Nest + Spotify things actually hooked up and working, but here's some music to listen to in the meantime.]

¶ We Built This City On · 28 February 2014 listen/tech

My colleague Paul Lamere, at The Echo Nest, has been looking at music listening by listener region and artist: most distinctive artists, favorite artists and anti-preferences.

Here, to go along with that, is a breakdown of music genres by the home cities of the artists responsible:

We Built This City On

Some of these are kind of duh, like "chicago house" being the top genre for Chicago. But as always in data-anything, some obviousness is what gives us the courage to believe the things we didn't already think.

On a plane flight to Dallas for the funeral of one of my dearest and nearest-to-life-long friends, I distracted myself from sadness and terror by prodding some music out of my iPad. On the way back home I wrote some words to go with it, fragments of a song of remembrance, or at least of imagination in absentia. Later, after Bethany pointed out that for once I'd written too few words, I wrote some more. Today I sang them, over and over again.

Those of you who have not before listened to any of my own music should be warned that it is cheerfully devoid of technical virtues, but for the moment I have chosen to treat this as a style.

Those of you who did not know Tex are really not at much of a disadvantage for understanding what is going on in the song, but you missed one of the most unforgettable and unmistakable people I ever met, and one who carried me, at times literally, through much of my childhood. He deserves far better than this maudlin, lurching song, but once you're dead you can't do anything about the ways people make up to miss you.

The Heart of the Sky (3:23)

¶ Translation and Significance · 17 December 2013 listen/tech

A couple recent things elsewhere:

- I was interviewed for a new Brazilian arts site called Galileu, and you can read the published translation to Portuguese, or the amusingly incoherent reverse-translation back to English by Google Translate.

- I wrote a short introduction to Every Noise at Once for the stats-in-use site Significance.

- Every Noise at Once itself now has a couple bonus maps for the hottest songs of 2013 and the most retrospectively prescient 2013 finds from The Echo Nest Discovery list.

¶ Good Boring Results · 25 November 2013 listen/tech

Paul Lamere wrote a post on Music Machinery yesterday about deep artists, where by "deep" we mean the opposite of one-hit wonders, artists with large, rich catalogs awaiting your listening and exploration. Paul and I both work at The Echo Nest, trying in one way or another to make sense out of the vast amount of music data the company collects.

Often there is more than one sense to be made of the same data. Sometimes many more than one. I liked Paul's intro about one-hit hits as "non-nutritious" music, with "deep" artists as the converse nutritious music, but after that statement of concept, it seemed painfully ironic to me that his calculations resulted in the #1 score for depth going to the Vitamin String Quartet, which I think of as the artificially-fortified sugar-coated cereal of music. Their catalog, like that of the Glee Cast at #3, is certainly vast and consistent. But I wanted to measure a different kind of depth.

So I ran some different calculations, and made a different list. For this one I took 10k or so reasonably well-known artists, and for each artist calculated an inversely-weighted average popularity of their 100 most popular songs excluding their top 10. If Paul's list is the opposite of one-hit-ness, then mine is the opposite of ten-hit-ness. I'm trying to find the artists whose catalogs are not necessarily the most vast, but where the vastness has been explored most rewardingly, artists where their 100th (or 110th) hit is still empirically world-class.
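As a rough sketch of that calculation, and not the actual Echo Nest code: the function below assumes "inversely-weighted" means each song is weighted by one over its rank within the slice, and that the slice is ranks 11 through 110. The name `depth_score`, its parameters, and the 0-to-1 popularity values are all hypothetical.

```python
def depth_score(popularities, skip=10, take=100):
    """Inverse-rank-weighted mean popularity of the songs after the top `skip`.

    Assumption: the k-th song of the remaining slice gets weight 1/k.
    """
    ranked = sorted(popularities, reverse=True)[skip:skip + take]
    if not ranked:
        # Fewer than skip+1 songs: no catalog beyond the hits to score.
        return 0.0
    weights = [1.0 / k for k in range(1, len(ranked) + 1)]
    return sum(w * p for w, p in zip(weights, ranked)) / sum(weights)


# Invented catalogs: ten big hits each, then either a strong or a weak tail.
deep_catalog = [1.0] * 10 + [0.8] * 100
shallow_catalog = [1.0] * 10 + [0.1] * 100
print(depth_score(deep_catalog) > depth_score(shallow_catalog))
```

Dropping the top 10 before averaging is what keeps an artist with a handful of enormous hits and nothing else from scoring well.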

The good news is that this worked. The bad news is that the resulting list is more than a little boring. We might not have been able to guess this ordering, exactly, but very few of these artists below stand out as fundamentally surprising. Yes, yawn, the Beatles at #1. Eminem at #2 hints at a novelty that the rest of the list doesn't really continue to deliver. I hadn't heard of Argentine singer Andrés Calamaro at #33, but he has won a Latin Grammy and sold millions of records, so that's my ignorance. Conversely, I love Nightwish and Ludovico Einaudi, but didn't fully realize how many other people do, too. Otherwise, yeah, you probably knew about these people already.

But sometimes data reveals new truths, and sometimes, like this, it confirms existing ones. And revealing new truths is cooler, but only if the new truths actually have truth to them, and the best way to confirm our ability to generate true truths is to sometimes generate predictable true truths predictably. Anybody who presumes to do data-driven music discovery ought to have to show that they know what the opposite of "discovery" is. If purported math for "nutritious" doesn't mostly start with vegetables you already know you're supposed to be eating (like Paul's has Bach, Vivaldi and Chopin at #2, 4 and 5), don't trust it.

So the occasional boring is OK, even good, and in that good boring spirit, here's my good boring version of the deepest artists:

1. The Beatles

2. Eminem

3. Pink Floyd

4. Iron Maiden

5. Red Hot Chili Peppers

6. Radiohead

7. David Bowie

8. Metallica

9. Depeche Mode

10. Bob Dylan

11. Muse

12. Coldplay

13. Green Day

14. Korn

15. Tom Waits

16. Rihanna

17. Kanye West

18. Megadeth

19. Daft Punk

20. The Smashing Pumpkins

21. Nine Inch Nails

22. The Cure

23. Madonna

24. Linkin Park

25. AC/DC

26. Beyoncé

27. The Rolling Stones

28. Queen

29. Britney Spears

30. The National

31. Jay-Z

32. Bruce Springsteen

33. Andrés Calamaro

34. Johnny Cash

35. John Mayer

36. U2

37. blink-182

38. Placebo

39. Christina Aguilera

40. The Offspring

41. The Black Keys

42. Foo Fighters

43. Taylor Swift

44. Michael Jackson

45. Lady Gaga

46. Moby

47. Marilyn Manson

48. Nightwish

49. Mariah Carey

50. Jack Johnson

51. Black Sabbath

52. Nirvana

53. Motörhead

54. Nick Cave & The Bad Seeds

55. Avril Lavigne

56. Arctic Monkeys

57. Bob Marley

58. Judas Priest

59. Oasis

60. Blur

61. Queens of the Stone Age

62. Beastie Boys

63. Death Cab for Cutie

64. Céline Dion

65. Gorillaz

66. Slayer

67. R.E.M.

68. Jimi Hendrix

69. Rise Against

70. Parov Stelar

71. Dream Theater

72. The White Stripes

73. The Doors

74. Bad Religion

75. In Flames

76. Maroon 5

77. Beck

78. The Killers

79. Michael Bublé

80. Modest Mouse

81. Hans Zimmer

82. 2Pac

83. Massive Attack

84. Elliott Smith

85. Bon Jovi

86. Robbie Williams

87. The Ramones

88. Ludovico Einaudi

89. Aerosmith

90. The Who

91. Sting

92. Scorpions

93. Sufjan Stevens

94. Lou Reed

95. Ayreon

96. Rush

97. NOFX

98. Paul McCartney

99. Neil Young

100. Nas

¶ Supercollector · 20 November 2013 listen/tech

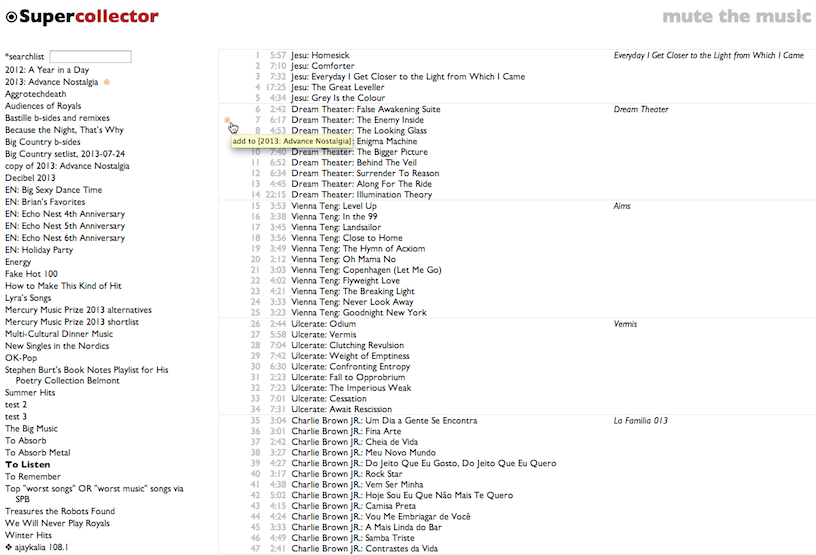

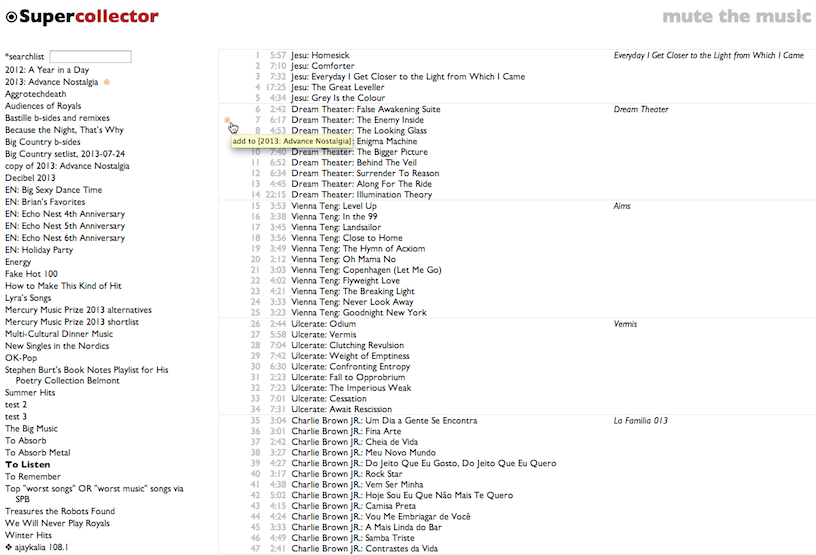

I kind of overprepared for the recent Boston Music Hackday. I ended up presenting a cheerfully simplistic but surprisingly effective automatic chorus-finder, which might conceivably have some future function at work, but that was actually my 5th hack idea, after I accidentally finished the first 4 before hacking was supposed to officially start.

#1, which I accidentally did weeks in advance, was Is This Band Name Taken?, which is pretty literally the simplest possible Echo Nest API application, as it calls a single API function and doesn't even check the result other than to see if there is one, but still got me and the Echo Nest into the Boston Herald yesterday.

#s 2, 3, 4 and 6 (even #5 turned out to require less work than I pessimistically anticipated) all had various things to do with the Rdio API, and eventually I realized that I was actually writing an alternate Rdio client one disconnected feature at a time, so I put them together. And gave the assemblage a name. And you can try it.

It is called Supercollector. I think this is a pretty good name for a music-management application, and I apologize for squandering it on one that I do not expect to have particularly widespread appeal. Rdio's own software is lovely, and a big part of why Rdio is clearly the best streaming music service anybody has so far made, and my construction of an alternative is intended as a minor addition, not any kind of replacement.

That said, there are a variety of fiddly workflow-ish things I do in the course of exploring music, and Supercollector is designed to do those in a ruthlessly streamlined way. It facilitates using one playlist as a candidate list for another. It turns search results into playlists. It turns artist catalogs into playlists. It lets you poke obsessively through playlists with an obsessive minimum of extra pointer movements. It lets you move whole albums into and out of playlists. It replaces playlist tracks that have annoyingly become unavailable since you picked them. It keeps track of your places in multiple playlists at once.

These are obscure needs, but if they also happen to be among your obscure needs, give it a try.

[Obviously you need an Rdio account. I mean, in general. To use Supercollector you probably also need to have some Rdio playlists, otherwise you're going to get a big empty screen with "Supercollector" at the top.]

¶ The Opposite of Love Is Love · 16 November 2013 listen/tech

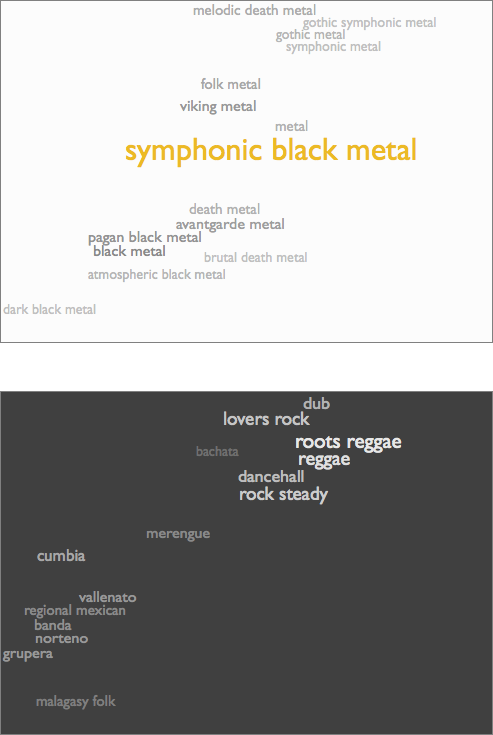

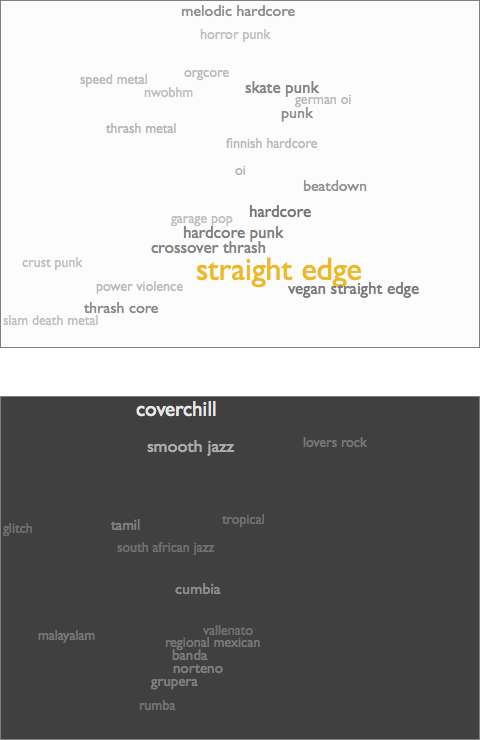

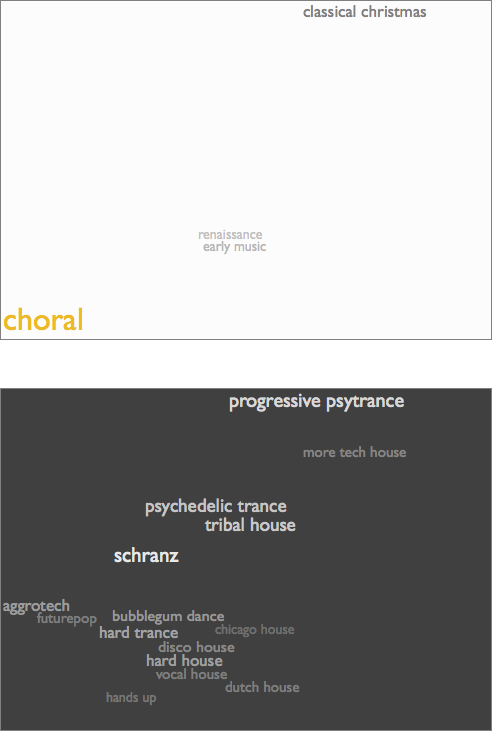

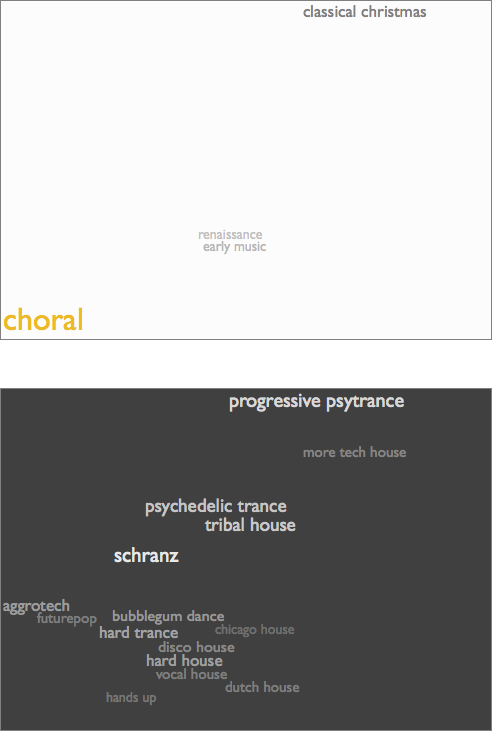

The data and math behind Every Noise at Once allow me to calculate audio similarity between genres, which is pretty obviously cool and useful. The distance scores can be sorted the other way, too, to produce dissimilarity. This works, in the sense that the program doesn't crash before producing results (my first level of quality in computational aesthetics), but it turns out that the farthest genre from pretty much anything is always one of a handful of extreme outliers: choral music, poetry readings, relentless tech house, satanic black metal. This sort of makes sense (my second level of quality), but it isn't very interesting or useful (level three).

It recently occurred to me to try it a different way. Instead of calculating the furthest genre, I instead take each genre's position in the 12-dimensional analytical space, invert it through the origin of the space, and find the genres closest to that inverted point.

Which sounds delightfully abstruse. "12-dimensional analytical space"! But it just means that I'm trying to produce a computable notion of genre opposition that finds some kind of music that is approximately as conventional or extreme as the genre you started with, but in which every individual aspect we can measure has the opposite polarity. So if we have a genre that's a lot faster and slightly louder and somewhat more electric than the average, I look for a genre that's correspondingly a lot slower and slightly quieter and somewhat more acoustic than the average. Except there are 12 metrics, not just 3, which is hard to picture as a space, but no harder to calculate.
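A minimal sketch of the difference between "farthest" and "opposite", using three made-up dimensions instead of 12. It assumes each genre is a point whose coordinates are already centered on the average of all music, so the origin is "average". The genre labels, coordinates and function names are invented for illustration and are not the Every Noise at Once data or code.

```python
import math


def distance(a, b):
    """Plain Euclidean distance between two equal-length points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def farthest(genres, name):
    """The naive approach: whichever genre is most distant from `name`."""
    others = [g for g in genres if g != name]
    return max(others, key=lambda g: distance(genres[g], genres[name]))


def opposites(genres, name, count=3):
    """Genres nearest to the reflection of `name` through the origin."""
    target = [-x for x in genres[name]]
    others = [g for g in genres if g != name]
    return sorted(others, key=lambda g: distance(genres[g], target))[:count]


# Invented (tempo, loudness, electric-ness) offsets from the average.
genres = {
    "a": (0.6, 0.1, 0.3),          # a lot faster, slightly louder, more electric
    "b": (-0.5, -0.1, -0.3),       # roughly a's mirror image
    "c": (0.1, 0.0, -0.1),         # close to the center
    "outlier": (-3.0, 3.0, -3.0),  # extreme in every direction
}
print(farthest(genres, "a"))      # the extreme outlier wins the distance contest
print(opposites(genres, "a", 1))  # the mirrored point picks the comparable genre
```

Because the reflected point sits exactly as far from the center as the original, its nearest neighbors tend to be about as conventional or extreme as the starting genre, which the farthest-point search does not guarantee.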

This version also doesn't crash, by which I mean that it crashed a little, of course, until I got the right number of "i"s into all the uses of "audiocentricity", and stopped overwriting function declarations with variables (ah, Python...), but now it doesn't, so that's level one.

There's some subjectivity to the question of whether anything under the heading of "opposite of a genre" makes sense. But when I measure that audiocentricity, or how close things are to the theoretical center of the imaginary space, the highest scoring genres include such definitively mild forms as mellow gold, jam band and soft rock, and the lowest scores go to the most recognizably extreme forms (culminating, again, in choral music). And the opposite of choral music turns out to be schranz, and the opposite of symphonic black metal is roots reggae, and the opposite of straight edge is anonymous bossa nova covers of Sting songs. Which feels like it's onto something. So I'm going to call that good enough for level two.
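The post only says that audiocentricity measures closeness to the center of the space, so the exact formula here is an assumption: this sketch scores a point as 1 at the origin, falling toward 0 as distance grows. The function name matches the word in the text, but the body and the sample points are hypothetical.

```python
import math


def audiocentricity(point):
    """Assumed form: 1 / (1 + distance from the origin)."""
    return 1.0 / (1.0 + math.sqrt(sum(x * x for x in point)))


# Invented coordinates: one mild point near the center, one extreme point.
mild = (0.1, -0.05, 0.0)
extreme = (-3.0, 3.0, -3.0)
print(audiocentricity(mild) > audiocentricity(extreme))
```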

To help find out whether these opposites are interesting or useful, I've added them to my maps. At the bottom of each individual genre page, where there was already a little inset map of the genre's musical neighbors, there's now also an inverted nightside view of the area of the genre's opposites. E.g. this pair for choral music:

In the nearby map you see that there's very little very close to choral music, but tunnel through the center to the other side of the musical universe and you emerge in a glittering midnight riot of relentless motion. Choral music is cool. But manic psytrance is also cool.

Here are symphonic black metal and straight edge:

I'd long believed that I simply can't stand anything resembling reggae, but in fact I turn out to like dancehall a lot. And bachata and vallenato are fabulous, and malagasy folk is kind of like a piece of Africa snapped off and went Caribbean. On the other side, "coverchill" is kind of a grimly fascinating convocation of horribleness, but for me glitch is anti-pop comfort music, and Malayalam is not only a trove of Indian pop, but also the only major human language whose name is a palindrome. And the fact that that little cluster of regional Mexican styles is in some sense the opposite of both symphonic black metal and straight edge is a valuable reminder about the subjective nature of even the magnitudes of distinctions.

But of course the opposite of anything is amazing. The opposite of love is still love. Even the idea of opposition is essentially love undergoing introspection. If you tire, for just a moment, of whatever you already adore, all you have to do is try to look inside yourself and overshoot, and there you are amidst a dozen definitively different things gleefully swarming to enthrall you.

[PS: Level four is to discover something we didn't exactly know yet. Level five is to discover how to measure something we didn't know how to know.]

¶ Every Number at Once · 7 November 2013 listen/tech

For the past couple months the Echo Nest blog has been running a series of posts about the changing attributes of popular music over time: danceability, energy, tempo, loudness, acousticness, valence, mechanism (how rigidly consistent a song's tempo stays), organism (a combination of acoustic instrumentation and non-rigid human tempo-fluidity) and bounciness (spikiness and dynamic variation vs atmospheric density).

The series was based on what we refer to as my "research". But what this really means is that one night it occurred to me that I had this pile of code for analyzing the aggregate acoustic characteristics of genres (one byproduct of which is Every Noise at Once), and that if I snipped off the part at the beginning that picked sets of songs by genre, I could feed other sets of songs into it and get other analytical slices through the vast Echo Nest database of songs.

Slicing them by year was pretty obviously interesting. But it was only a few minutes of easy work to also get slices by popularity (which we call hotness) and country, so I did those, too.

The obvious question about any of these analyses is: how sure are we that they actually mean anything? The question maybe seems less pressing in cases where the math indicates a trend that we already suspect exists from our regular experience of the world, like songs increasing in drum-machineyness over the decades since the invention of the drum machine. It hangs more heavily over non-trend assertions like our claims that danceability, valence and tempo have stayed relatively constant over time.

The answer is that I'm pretty sure. And the reason is this table:

This shows you the 12 audio metrics I have been measuring, across the four different analytical song-slices and a fifth one in which I fed to the same process sets of similar size that were actually selected randomly. The numbers are discrimination scores*, with higher numbers indicating that a given metric is better at explaining the differences between that kind of slice. So the high 0.840 for danceability/genre means that on the whole genres mostly tend towards internal consistency in danceability, and that the set of genres covers a relatively wide range of danceability values. The low 0.106 for danceability/year, on the other hand, means that the songs from a given year don't tend to exhibit any consistent pattern of danceability, and the aggregate scores don't vary much from year to year.
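The actual discrimination formula is not given in this text, so the following is a stand-in rather than the author's method: it uses the share of a metric's total variance that is explained by group membership (between-group sum of squares over total sum of squares). That ratio is high when groups are internally consistent and their averages are spread widely, which matches the behavior described above. The function name and the sample values are invented.

```python
def discrimination(groups):
    """Share of variance explained by grouping (assumed stand-in measure).

    groups: dict mapping a slice label to a non-empty list of metric values.
    """
    values = [v for vs in groups.values() for v in vs]
    grand_mean = sum(values) / len(values)
    total = sum((v - grand_mean) ** 2 for v in values)
    if total == 0:
        return 0.0
    between = sum(
        len(vs) * (sum(vs) / len(vs) - grand_mean) ** 2
        for vs in groups.values()
    )
    return between / total


# Invented values: consistent groups far apart vs. groups that look alike.
tight = {"x": [0.10, 0.12, 0.11], "y": [0.90, 0.88, 0.91]}
mixed = {"x": [0.10, 0.90, 0.50], "y": [0.12, 0.88, 0.50]}
print(discrimination(tight) > discrimination(mixed))
```

Feeding the same function randomly selected groups, as in the fifth column described above, gives a baseline for how large a score can get by chance.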

There's like a small picaresque novel in this table, but here are some of the main things I think it suggests:

- All of these metrics are useful for describing and categorizing musical styles. I picked these 12 for that purpose in the first place, of course, so that's not particularly surprising, but it's confirmation that I haven't been totally wasting my time with something irrelevant.

- Tempo is by far the weakest of these metrics for characterizing genres. There are a few reliably slower (new age, classical) and faster (trance, house) genres, of course, but there are a lot of genres that allow both fast and slow songs with equanimity, and the average tempos for most genres hover around the average tempo for music in general.

- The metrics that show the most discriminatory power over time are organism, acousticness and mechanism. This is kind of 2.5 insights, not 3, since my "organism" score is actually a Euclidean combination of acousticness and (inverse) mechanism. It's not news that popular music has gotten more electrified and quantized over time, of course, but it's minor news that I got computers to reach this conclusion through math, and maybe also news that none of the other trends in music are as quantifiably dramatic.

- The subjective impressions that music is getting louder and more energetic over time (these two aren't wholly independent, either, but loudness is only a small component of energy) are supported by numbers.

- None of these metrics show any particularly notable correlation with hotness. That is, popular songs don't tend to be reliably one way or another along any of these dimensions. Which doesn't by any means prove that there isn't a secret formula for the predictable production of pop hits, but it at least doesn't seem to involve a linear combination of these 12 factors.

- The music produced by people from a country tends to be less distinct on aggregate than the music produced within a genre. That is, maybe, the communities we choose tend to have even stronger identities than the communities into which we are born.

- That last thing said, there is still discernible variation between and among countries. The most discriminatory metric here turns out to be bounciness, which I'm pleased to see since that's one I sort of made up myself. For the record, the bounciest countries are Jamaica, the Dominican Republic, Senegal, Ghana and Mali, and the least bouncy are Finland, Sweden and Norway. Although Denmark is bouncier than Viet Nam, and Russia is bouncier than Guatemala, so it's not strictly a function of latitude.

- All the scores for random slices are far lower than the scores for non-random slices. In fact, even the lowest real score (0.053 for tempo/hotness, indicating that popular songs are by and large no faster or slower than unknown or unpopular songs) is higher than the highest random score. So that gives us a baseline confidence that both the metrics and the non-random slices are registering genuine variation. And helps explain why efficient music discovery is a more complicated problem than "pick a random song that exists and see if you like it".

* For those of you who care, here's how these discrimination scores work. For each metric/slice combination I calculated both the mean and standard deviation across a few thousand relevant songs from Echo Nest data (we have a lot of data, including detailed audio analysis for something like 250 million tracks). The genre slice has more than 700 genres, I used the 64 years from 1950 to 2013, hotness was done in 80 buckets, there were 97 countries for which we had a critical mass of data, and the random slice used 26 random buckets. I then took the standard deviation of the average values for the slice (so a wider spread of averages across the genres/years/whatever means more discrimination) and divided by the average of the standard deviations (so tighter ranges of values within each genre/year/whatever means more discrimination). I feel like I probably didn't invent this, but I couldn't easily find any evidence of its previous existence online.

[And because it seems sad to have a thing about music without some actual music to listen to, I leave you with The Sound of Everything, my autogenerated genre-overview playlist, which has one song for every genre we track, in alphabetical order by genre-name. This grows and changes as the genre map expands, but as of 7 November 2013 it has 756 songs for a total running time of 55:29:28. And that's just one song per genre. Almost any one of these genres could consume your entire life. (Almost. There are some exceptions.)]

[As of 20 May 2016 it has 1454 songs and lasts 105 hours.]

The series was based on what we refer to as my "research". But what this really means is that one night it occurred to me that I had this pile of code for analyzing the aggregate acoustic characteristics of genres (one byproduct of which is Every Noise at Once), and that if I snipped off the part at the beginning that picked sets of songs by genre, I could feed other sets of songs into it and get other analytical slices through the vast Echo Nest database of songs.

Slicing them by year was pretty obviously interesting. But it was only a few minutes of easy work to also get slices by popularity (which we call hotness) and country, so I did those, too.

The obvious question about any of these analyses is: how sure are we that they actually mean anything? The question maybe seems less pressing in cases where the math indicates a trend that we already suspect exists from our regular experience of the world, like songs increasing in drum-machineyness over the decades since the invention of the drum machine. It hangs more heavily over non-trend assertions like our claims that danceability, valence and tempo have stayed relatively constant over time.

The answer is that I'm pretty sure. And the reason is this table:

| # | metric | genre | year | hotness | country | random |
|---|---|---|---|---|---|---|
| 1 | danceability | 0.840 | 0.106 | 0.112 | 0.279 | 0.038 |
| 2 | energy | 0.882 | 0.552 | 0.112 | 0.200 | 0.016 |
| 3 | tempo | 0.321 | 0.140 | 0.053 | 0.111 | 0.020 |
| 4 | loudness | 0.897 | 0.474 | 0.125 | 0.183 | 0.020 |
| 5 | acousticness | 0.958 | 0.713 | 0.161 | 0.242 | 0.019 |
| 6 | valence | 0.601 | 0.102 | 0.075 | 0.334 | 0.030 |
| 7 | dynamic range | 0.932 | 0.332 | 0.175 | 0.347 | 0.031 |
| 8 | flatness | 0.681 | 0.319 | 0.143 | 0.168 | 0.020 |
| 9 | beat strength | 0.819 | 0.201 | 0.129 | 0.282 | 0.038 |
| 10 | mechanism | 0.870 | 0.636 | 0.111 | 0.199 | 0.024 |
| 11 | organism | 0.961 | 0.746 | 0.137 | 0.225 | 0.023 |
| 12 | bounciness | 0.911 | 0.274 | 0.159 | 0.329 | 0.033 |

This shows you the 12 audio metrics I have been measuring, across the four different analytical song-slices and a fifth one in which I fed sets of similar size, selected randomly, through the same process. The numbers are discrimination scores*, with higher numbers indicating that a given metric is better at explaining the differences between slices of that kind. So the high 0.840 for danceability/genre means that on the whole genres mostly tend towards internal consistency in danceability, and that the set of genres covers a relatively wide range of danceability values. The low 0.106 for danceability/year, on the other hand, means that the songs from a given year don't tend to exhibit any consistent pattern of danceability, and the aggregate scores don't vary much from year to year.

There's like a small picaresque novel in this table, but here are some of the main things I think it suggests:

- All of these metrics are useful for describing and categorizing musical styles. I picked these 12 for that purpose in the first place, of course, so that's not particularly surprising, but it's confirmation that I haven't been totally wasting my time with something irrelevant.

- Tempo is by far the weakest of these metrics for characterizing genres. There are a few reliably slower (new age, classical) and faster (trance, house) genres, of course, but there are a lot of genres that allow both fast and slow songs with equanimity, and the average tempos for most genres hover around the average tempo for music in general.

- The metrics that show the most discriminatory power over time are organism, acousticness and mechanism. This is kind of 2.5 insights, not 3, since my "organism" score is actually a Euclidean combination of acousticness and (inverse) mechanism. It's not news that popular music has gotten more electrified and quantized over time, of course, but it's minor news that I got computers to reach this conclusion through math, and maybe also news that none of the other trends in music are as quantifiably dramatic.

- The subjective impressions that music is getting louder and more energetic over time (these two aren't wholly independent, either, but loudness is only a small component of energy) are supported by numbers.

- None of these metrics show any particularly notable correlation with hotness. That is, popular songs don't tend to be reliably one way or another along any of these dimensions. Which doesn't by any means prove that there isn't a secret formula for the predictable production of pop hits, but it at least doesn't seem to involve a linear combination of these 12 factors.

- The music produced by people from a country tends to be less distinct on aggregate than the music produced within a genre. That is, maybe, the communities we choose tend to have even stronger identities than the communities into which we are born.

- That last thing said, there is still discernible variation between and among countries. The most discriminatory metric here turns out to be bounciness, which I'm pleased to see since that's one I sort of made up myself. For the record, the bounciest countries are Jamaica, the Dominican Republic, Senegal, Ghana and Mali, and the least bouncy are Finland, Sweden and Norway. Although Denmark is bouncier than Viet Nam, and Russia is bouncier than Guatemala, so it's not strictly a function of latitude.

- All the scores for random slices are far lower than the scores for non-random slices. In fact, even the lowest real score (0.053 for tempo/hotness, indicating that popular songs are by and large no faster or slower than unknown or unpopular songs) is higher than the highest random score. So that gives us a baseline confidence that both the metrics and the non-random slices are registering genuine variation. And helps explain why efficient music discovery is a more complicated problem than "pick a random song that exists and see if you like it".
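
If you'd rather not take my word for that last comparison, it's easy to check against the table. Here's a minimal sketch in Python that just transcribes the table's numbers and compares the lowest real score to the highest random one. The names `SLICES`, `SCORES` and `baseline_check` are made up for this illustration and aren't from any actual Echo Nest code.

```python
# Discrimination scores transcribed from the table above.
# Column order: genre, year, hotness, country, random.
SLICES = ["genre", "year", "hotness", "country", "random"]
SCORES = {
    "danceability":  [0.840, 0.106, 0.112, 0.279, 0.038],
    "energy":        [0.882, 0.552, 0.112, 0.200, 0.016],
    "tempo":         [0.321, 0.140, 0.053, 0.111, 0.020],
    "loudness":      [0.897, 0.474, 0.125, 0.183, 0.020],
    "acousticness":  [0.958, 0.713, 0.161, 0.242, 0.019],
    "valence":       [0.601, 0.102, 0.075, 0.334, 0.030],
    "dynamic range": [0.932, 0.332, 0.175, 0.347, 0.031],
    "flatness":      [0.681, 0.319, 0.143, 0.168, 0.020],
    "beat strength": [0.819, 0.201, 0.129, 0.282, 0.038],
    "mechanism":     [0.870, 0.636, 0.111, 0.199, 0.024],
    "organism":      [0.961, 0.746, 0.137, 0.225, 0.023],
    "bounciness":    [0.911, 0.274, 0.159, 0.329, 0.033],
}


def baseline_check(scores):
    """Return (lowest real score, highest random score) as tuples.

    The "real" scores are the first four columns; the last column is
    the random baseline.
    """
    real = [
        (value, metric, SLICES[i])
        for metric, row in scores.items()
        for i, value in enumerate(row[:4])
    ]
    random_scores = [(row[4], metric) for metric, row in scores.items()]
    return min(real), max(random_scores)


lowest_real, highest_random = baseline_check(SCORES)
print("lowest real score:", lowest_real)
print("highest random score:", highest_random)
print("real exceeds random:", lowest_real[0] > highest_random[0])
```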

* For those of you who care, here's how these discrimination scores work. For each metric/slice combination I calculated both the mean and standard deviation across a few thousand relevant songs from Echo Nest data (we have a lot of data, including detailed audio analysis for something like 250 million tracks). The genre slice has more than 700 genres, the year slice uses the 64 years from 1950 to 2013, hotness was done in 80 buckets, there were 97 countries for which we had a critical mass of data, and the random slice used 26 random buckets. I then took the standard deviation of the average values for the slice (so a wider spread of averages across the genres/years/whatever means more discrimination) and divided by the average of the standard deviations (so tighter ranges of values within each genre/year/whatever mean more discrimination). I feel like I probably didn't invent this, but I couldn't easily find any evidence of its previous existence online.
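
For the code-minded, the calculation described above could be sketched roughly like this in Python. This is an illustration under stated assumptions, not the actual code I ran: the function name `discrimination_score` and the toy buckets are invented for the example, and the choice of population standard deviation (`pstdev`) over the sample version is a guess, since the description above doesn't say which one was used.

```python
from statistics import mean, pstdev


def discrimination_score(buckets):
    """Spread of the bucket averages divided by the average spread
    within buckets.

    `buckets` maps a bucket name (a genre, a year, a country...) to the
    list of one metric's values for the songs in that bucket.
    Assumption: population standard deviation throughout.
    """
    bucket_means = [mean(values) for values in buckets.values()]
    bucket_stdevs = [pstdev(values) for values in buckets.values()]
    average_within = mean(bucket_stdevs)
    if average_within == 0:
        raise ValueError("every bucket has zero spread; score is undefined")
    return pstdev(bucket_means) / average_within


# Toy data, invented purely for illustration (not Echo Nest values).
# Two buckets that are internally tight and far apart from each other:
separated = {"a": [0.1, 0.2], "b": [0.8, 0.9]}
# Two buckets that are internally wide and centered on the same value:
overlapping = {"a": [0.1, 0.9], "b": [0.2, 0.8]}

print(discrimination_score(separated))
print(discrimination_score(overlapping))
```

The first toy set scores high because its bucket averages are far apart while the values inside each bucket stay close together. The second scores at or near zero because both buckets average out to about the same place, which is the situation the random slices are meant to approximate.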

[And because it seems sad to have a thing about music without some actual music to listen to, I leave you with The Sound of Everything, my autogenerated genre-overview playlist, which has one song for every genre we track, in alphabetical order by genre-name. This grows and changes as the genre map expands, but as of 7 November 2013 it has 756 songs for a total running time of 55:29:28. And that's just one song per genre. Almost any one of these genres could consume your entire life. (Almost. There are some exceptions.)]

[As of 20 May 2016 it has 1454 songs and lasts 105 hours.]

¶ Is This Band Name Taken? · 18 October 2013 listen/tech

Forming a band? About to pick a name for it? Help the world out and visit this first:

IS THIS BAND NAME TAKEN?